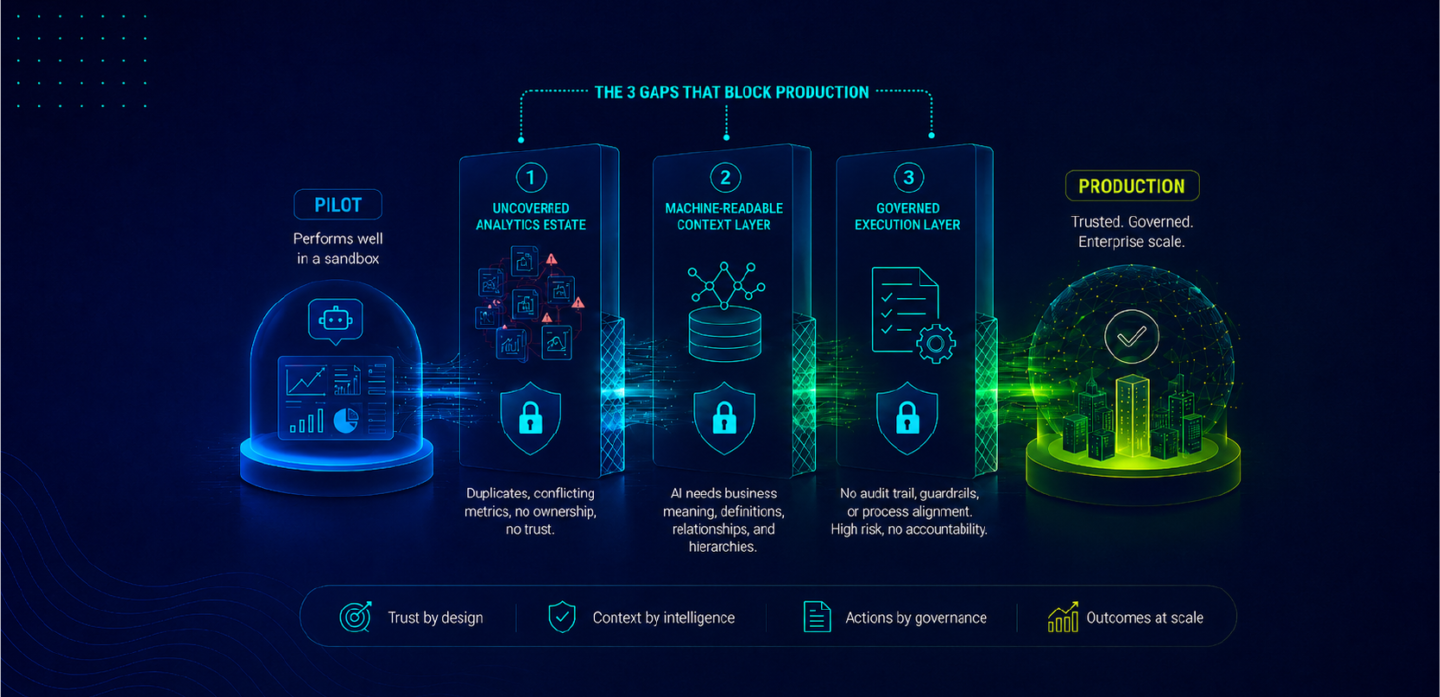

Enterprises are rapidly deploying AI copilots and agents across analytics environments. Data warehouses are connected, modern data stacks are in place, and model capabilities continue to improve. Yet, despite these investments, business users frequently report a lack of trust in AI-generated insights.

This challenge is often misattributed to model limitations or data quality issues. In reality, the root cause is more specific and structural. AI systems are able to interpret data at a technical level, but they lack the ability to understand how that data is used within business decision-making contexts.

An AI system can query a data warehouse and retrieve revenue figures. However, it cannot inherently determine which revenue dashboard is certified by Finance, which KPI definition is authoritative, or how that metric should be interpreted within a specific business scenario. This gap highlights a critical distinction between a data catalog and an analytics catalog. For enterprise AI, this distinction determines whether outputs are merely plausible or truly decision-ready.

What Each Catalog Actually Does

Data catalogs and analytics catalogs serve distinct but complementary roles within the enterprise data and analytics ecosystem. Understanding this distinction is essential for building AI-ready analytics infrastructure.

| Dimension | Data Catalog | Analytics Catalog |
| --- | --- | --- |
| Scope | Data infrastructure | Analytics and decision layer |
| Assets governed | Tables, schemas, pipelines | Dashboards, reports, KPIs |
| Primary users | Data engineers, data scientists | Business users, analysts |
| Governance focus | Data quality, lineage, structure | KPI definitions, ownership, certification |
| AI relevance | Data access and structure | Business context and decision trust |
| Example tools | Atlan, Alation, Collibra | ZenOptics Atlas |

A data catalog governs the data layer, ensuring visibility into data lineage, schema structure, and data quality. An analytics catalog governs the decision layer, where business users interact with dashboards, reports, and KPIs.

While both layers are necessary, the absence of an analytics catalog creates a critical gap in AI readiness.

Where Data Catalogs Fall Short in AI-Driven Analytics

Data catalogs are designed to solve challenges at the data infrastructure level. They provide comprehensive visibility into tables, columns, transformations, and lineage, enabling data teams to manage and govern complex data ecosystems effectively.

However, enterprise AI use cases typically operate at the analytics layer, not the raw data layer. When a business user asks an AI copilot to analyze revenue performance or identify drivers of growth, the system must interpret business logic rather than just data structures.

In such scenarios, a data catalog cannot answer key questions:

- Which dashboard is the authoritative source for revenue reporting?

- How is “region” defined within the organization?

- Which KPI definition is aligned with Finance versus Sales?

- Has the metric been validated and certified?

A data catalog provides structural context, but it does not provide decision context. As a result, AI systems rely on statistical inference rather than governed business definitions, leading to outputs that may be technically correct but misaligned with enterprise decision-making standards.

This limitation is not resolved through improved models or prompt engineering. It requires a dedicated analytics layer that captures and governs business context.

The Role of the Analytics Catalog in AI Readiness

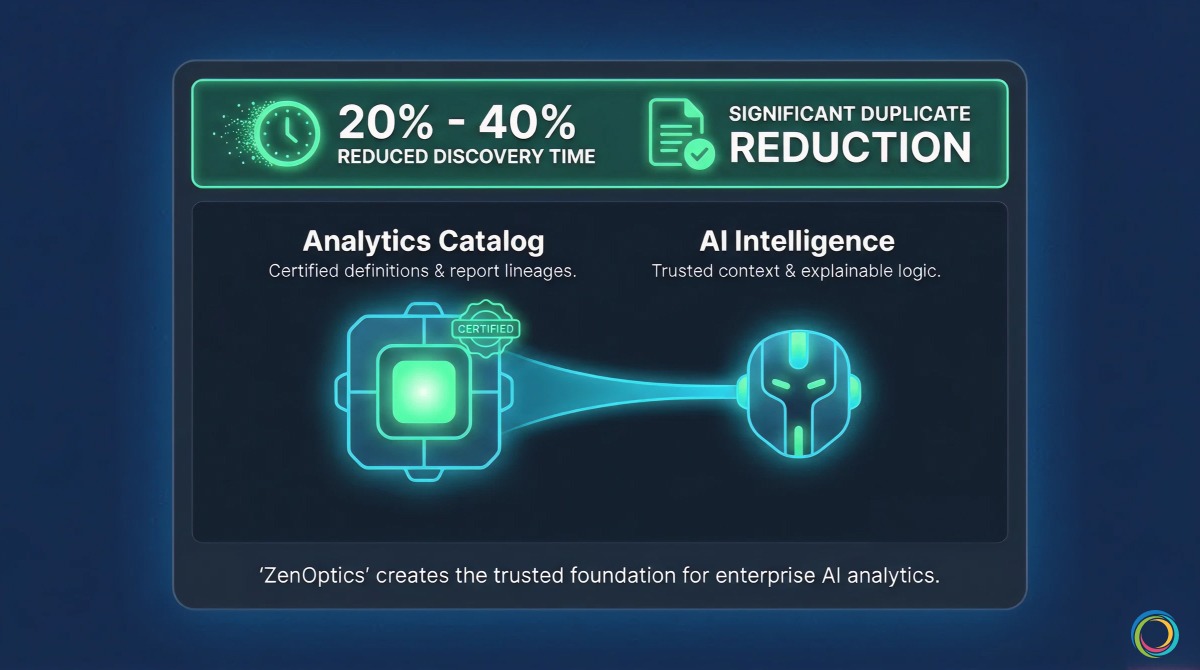

An analytics catalog addresses this gap by governing the assets that directly inform business decisions. It provides structured, machine-readable context that enables AI systems to align outputs with organizational definitions and standards.

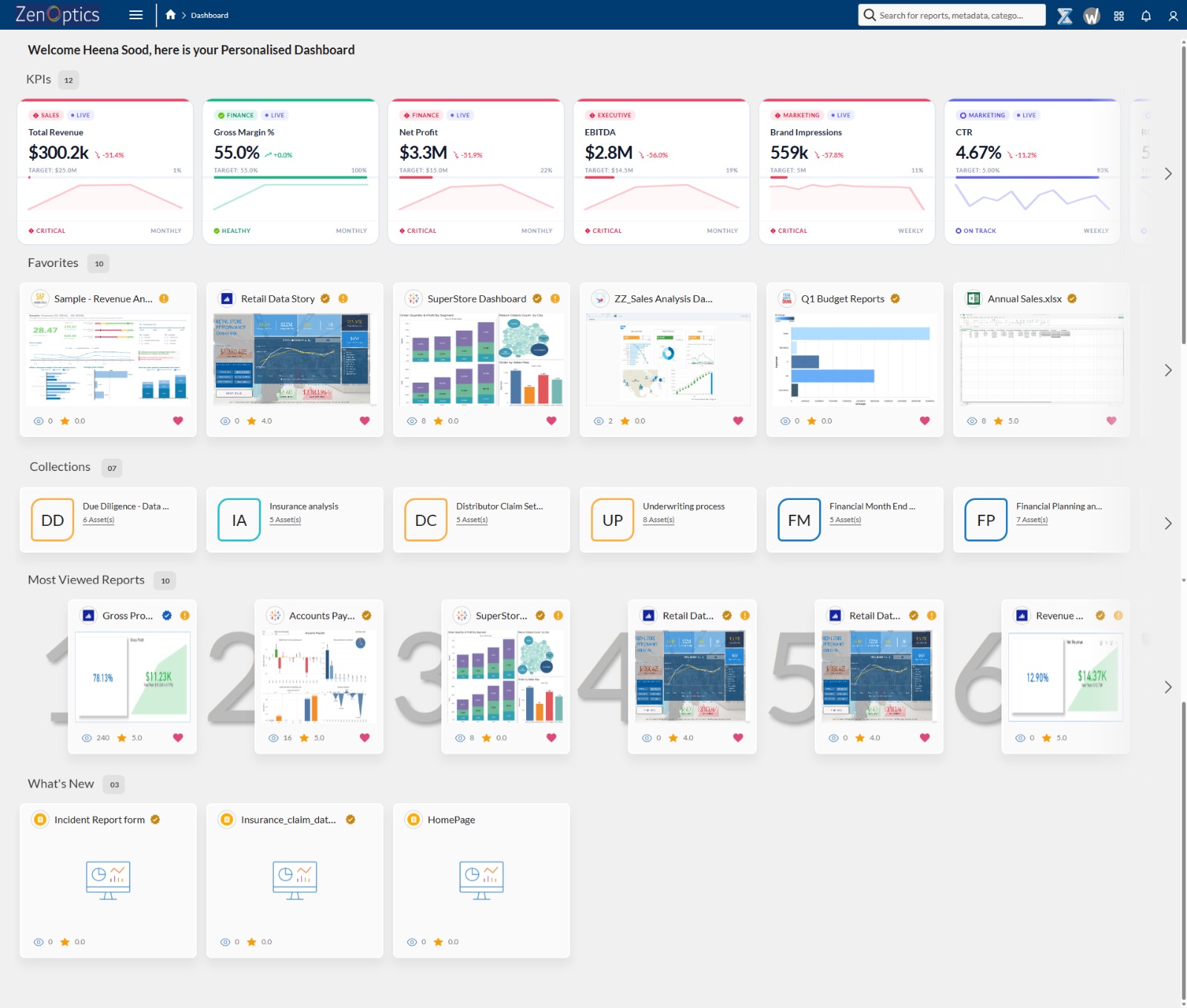

At ZenOptics, this capability is delivered through Atlas, which establishes an analytics system of record across all BI tools. Atlas catalogs dashboards, reports, and KPIs, while enabling certification, ownership assignment, and governance at scale.

This governed metadata is then transformed by Nexus into an AI-ready context layer. Nexus maps KPI definitions, aligns business terminology, and establishes relationships between metrics, allowing AI systems to interpret data within the correct business context.

Finally, Maestro governs how AI-driven insights are operationalized. It ensures that decisions are traceable, auditable, and aligned with approved workflows, introducing a layer of control and accountability essential for enterprise adoption.

Together, these layers enable a transition from data-driven outputs to decision intelligence.

Key Capabilities of an Analytics Catalog for AI

An analytics catalog provides several critical capabilities that directly impact AI performance and trustworthiness in enterprise environments.

First, it establishes certified metrics and KPI definitions. Each KPI is associated with a defined calculation, an owner, a certification status, and a revision history. This ensures that AI systems reference authoritative definitions rather than inferring meaning from raw data.
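As a rough illustration of what such a record could look like, the sketch below models a KPI entry with the four attributes named above. The `KpiDefinition` class, its field names, the `authoritative_definition` helper, and the sample formulas are all hypothetical. They are not the schema of Atlas or of any specific product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class KpiDefinition:
    """One catalog entry for a KPI (hypothetical schema, for illustration only)."""
    name: str
    calculation: str                 # defined calculation, e.g. a formula
    owner: str                       # accountable business owner
    certified: bool = False          # certification status
    revisions: List[str] = field(default_factory=list)  # revision history notes


def authoritative_definition(
    definitions: List[KpiDefinition], name: str
) -> Optional[KpiDefinition]:
    """Return the single certified definition for `name`, or None."""
    matches = [d for d in definitions if d.name == name and d.certified]
    # Zero or several certified definitions is a governance problem,
    # so the helper refuses to guess rather than pick one arbitrarily.
    if len(matches) != 1:
        return None
    return matches[0]


# Sample data is invented for the example.
catalog = [
    KpiDefinition(
        "net_revenue",
        "gross_revenue - refunds - discounts",
        owner="Finance",
        certified=True,
        revisions=["v1: initial definition", "v2: discounts excluded"],
    ),
    KpiDefinition("net_revenue", "gross_revenue - refunds", owner="Sales"),
]

kpi = authoritative_definition(catalog, "net_revenue")
print(kpi.owner if kpi else "no certified definition")
```

An AI layer built on a lookup like this would return the Finance-owned definition and could decline to answer when no certified definition exists, instead of inferring one from column names.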

Second, it enables cross-platform lineage at the analytics layer. While data catalogs track data lineage, analytics catalogs track how data is consumed across dashboards and reports. This allows AI systems to understand downstream impact and maintain consistency across outputs.
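One way to picture analytics-layer lineage is as a graph of consumption edges that can be walked to find everything affected by a change. The sketch below assumes a simple in-memory mapping. The asset identifiers and the `downstream_assets` function are invented for the example and do not reflect any particular tool's API.

```python
from collections import deque
from typing import Dict, List

# Hypothetical consumption edges: asset -> assets that consume it directly.
consumers: Dict[str, List[str]] = {
    "kpi:net_revenue": ["report:monthly_close", "dashboard:exec_summary"],
    "report:monthly_close": ["dashboard:board_pack"],
    "dashboard:exec_summary": [],
    "dashboard:board_pack": [],
}


def downstream_assets(graph: Dict[str, List[str]], start: str) -> List[str]:
    """Breadth-first walk returning every asset that depends on `start`."""
    seen = {start}          # never report the starting asset itself
    order: List[str] = []
    queue = deque(graph.get(start, []))
    while queue:
        asset = queue.popleft()
        if asset in seen:
            continue        # already visited, also guards against cycles
        seen.add(asset)
        order.append(asset)
        queue.extend(graph.get(asset, []))
    return order


impacted = downstream_assets(consumers, "kpi:net_revenue")
print(impacted)
```

With edges like these, a change to the `net_revenue` definition can be traced to every report and dashboard that would need review.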

Third, it incorporates usage and adoption signals. Frequently used and certified dashboards indicate trusted sources of truth. AI systems can prioritize these assets when generating responses, aligning outputs with actual business usage patterns.
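A minimal sketch of that prioritization, assuming the catalog exposes a certification flag and a recent view count per dashboard, might look like the following. The `Dashboard` fields, the 90-day window, and the sample names are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Dashboard:
    name: str
    certified: bool
    views_last_90_days: int   # hypothetical usage signal


def rank_sources(dashboards: List[Dashboard]) -> List[Dashboard]:
    """Order candidates: certified first, then by recent usage, descending."""
    return sorted(
        dashboards,
        key=lambda d: (not d.certified, -d.views_last_90_days),
    )


# Sample data is invented for the example.
candidates = [
    Dashboard("Sales Ops Revenue (draft)", certified=False, views_last_90_days=900),
    Dashboard("Finance Revenue Overview", certified=True, views_last_90_days=400),
    Dashboard("Regional Revenue Archive", certified=True, views_last_90_days=12),
]

ranked = rank_sources(candidates)
print([d.name for d in ranked])
```

Sorting on certification before usage is a deliberate choice in this sketch: a popular but uncertified dashboard never outranks a certified one. A real deployment might weight the two signals differently.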

Fourth, it captures business taxonomy and organizational context. Concepts such as region, product hierarchy, and sales channel are defined at the analytics layer. By making this context machine-readable, analytics catalogs enable AI systems to interpret queries in alignment with how the organization operates.
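To make "machine-readable" concrete, the sketch below stores a governed definition of "region" and resolves a user's wording to the canonical term. The taxonomy content (definition text, owner, values, synonyms) and the `resolve_term` helper are hypothetical placeholders, not an actual organizational standard.

```python
from typing import Dict, Optional

# Hypothetical governed taxonomy: canonical term -> definition metadata.
taxonomy: Dict[str, dict] = {
    "region": {
        "definition": "Sales territory assigned to the customer's billing address",
        "owner": "Sales Operations",
        "values": ["AMER", "EMEA", "APAC"],
        "synonyms": ["territory", "geo"],
    },
}


def resolve_term(term: str, glossary: Dict[str, dict]) -> Optional[str]:
    """Map a user's wording to a canonical taxonomy term, or None if unknown."""
    key = term.strip().lower()
    if key in glossary:
        return key
    for canonical, entry in glossary.items():
        if key in entry.get("synonyms", []):
            return canonical
    return None  # unknown terms are surfaced, not guessed


canonical = resolve_term("Territory", taxonomy)
print(canonical, "->", taxonomy[canonical]["definition"] if canonical else None)
```

A copilot that consults a glossary like this before building a query can apply the organization's definition of "region" instead of guessing from column names.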

These capabilities collectively enable what can be described as analytics-specific AI governance.

Why Enterprises Need Both Data and Analytics Catalogs

Data catalogs and analytics catalogs are not competing solutions; they address different layers of the analytics stack.

The data catalog governs the foundation, ensuring that data is accurate, traceable, and well-structured. The analytics catalog governs the decision layer, ensuring that insights are consistent, trusted, and aligned with business definitions.

Enterprises that rely solely on data catalogs often encounter a recurring issue: AI systems generate technically accurate responses that do not align with business expectations. This occurs because the AI lacks visibility into which metrics and dashboards are considered authoritative.

By integrating both layers (data governance and analytics governance), organizations can enable AI systems to operate with both structural and semantic understanding.

For a broader perspective on how this fits into enterprise AI readiness, see “From BI Metadata to AI-Ready Intelligence.”

Five Signs Your Organization Has an Analytics Catalog Gap

Several indicators suggest that an organization lacks a governed analytics layer.

- Reliance on manual lists of “official dashboards” indicates the absence of a centralized system of record.

- Inconsistent KPI values across reports point to misaligned metric definitions.

- The inability of AI systems to identify trusted sources reflects a lack of structured analytics context.

- Limited visibility into certified dashboards across tools suggests fragmented governance.

- Recurring questions about which dashboard to trust indicate systemic gaps in analytics governance.

These challenges are not isolated issues but symptoms of an incomplete analytics infrastructure.

Frequently Asked Questions

What is the difference between a data catalog and an analytics catalog?

A data catalog governs data infrastructure, including tables, schemas, and pipelines. An analytics catalog governs dashboards, KPIs, and metrics used for business decision-making. Both are essential for AI readiness.

Can a data catalog provide business context for AI?

A data catalog provides structural context but does not capture business definitions, ownership, or certification. These elements are managed within an analytics catalog.

Why do AI systems generate incorrect business insights despite high-quality data?

AI systems generate incorrect insights when they lack access to structured analytics context. Without certified KPI definitions and relationships, they rely on statistical inference rather than governed business logic.

What is a certified dashboard?

A certified dashboard is one that has been validated and approved by a designated business owner. It includes clear definitions, ownership, and revision history, making it a trusted source for decision-making.

How does ZenOptics Atlas differ from traditional data catalog tools?

ZenOptics Atlas is designed for the analytics layer, focusing on dashboards, KPIs, and reports. It complements data catalog tools by governing the decision layer and enabling AI-ready analytics.

What is analytics-specific AI governance?

It refers to governing analytics assets—KPIs, dashboards, and business definitions—before deploying AI, ensuring that AI outputs align with enterprise decision-making standards.