Analytics AI Time to Value Has Become the Measurement of Program Health

Analytics AI time to value has become the measurement every enterprise program is being judged against in 2026. The demo worked in thirty seconds. The pilot worked in three weeks. Production is somewhere between "still in QA" and "two quarters behind plan," and leadership is asking the CIO why.

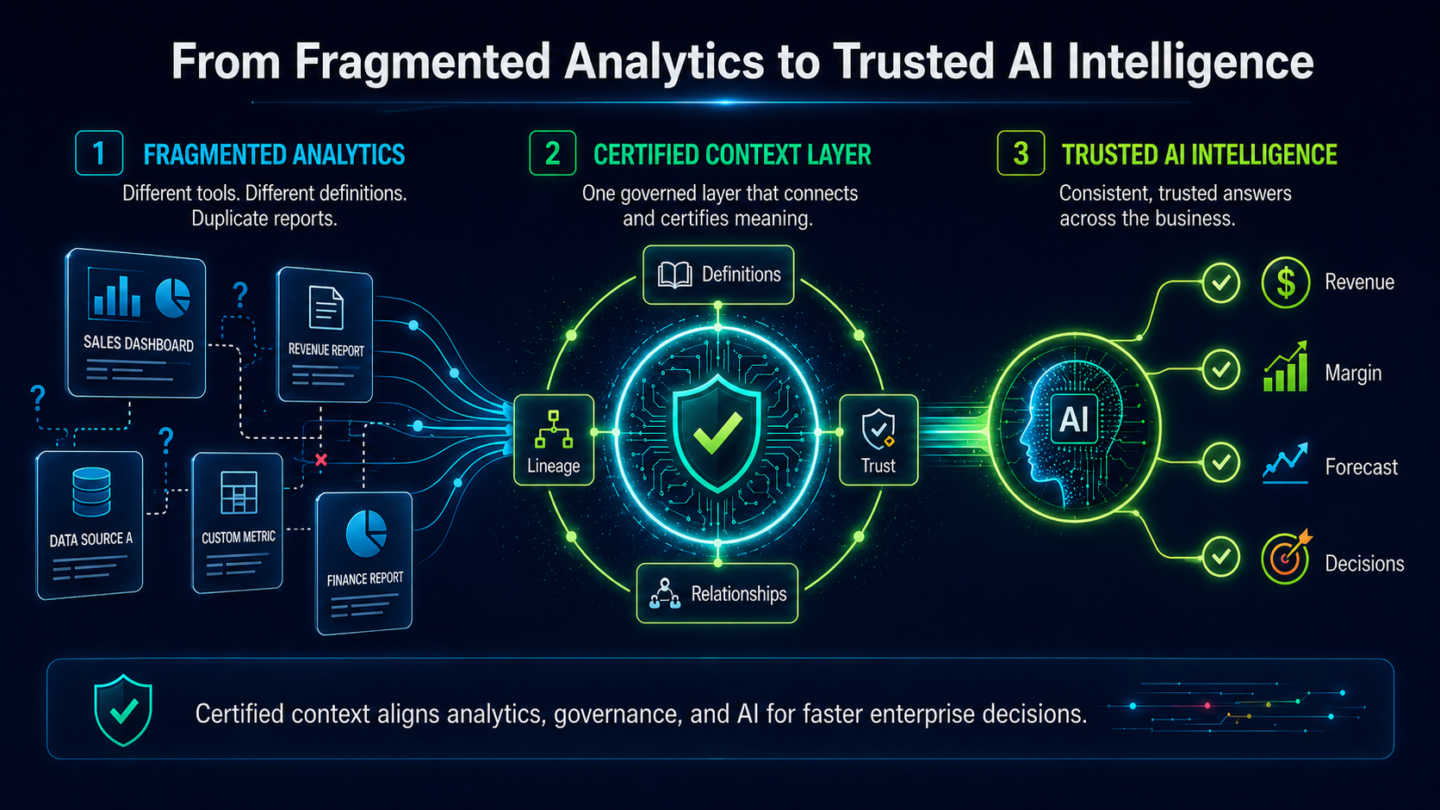

The question is reasonable. The answer most teams give does not hold up under a second question. "The data is messy." "The model needs tuning." "We need to fine-tune on our reports." Each of these frames analytics AI time to value as a technical pacing problem. It is not. It is a context pacing problem.

This post locates where enterprise analytics AI deployments actually slow down, names why context is the gating factor, and shows how a certified context substrate compresses time to value from quarters to weeks. For the full value-realization framework behind this argument, start with Analytics AI at Enterprise Scale: Why the Value Gap Is a Context Gap; the piece below zooms in on velocity.

The Pacing Misdiagnoses

Walk an enterprise analytics AI program timeline and the same bottlenecks keep reappearing in the Gantt chart: data engineering capacity, metric reconciliation across business units, security review, change management, training and adoption. Each of these is a real constraint. None of them is the one that stretches a six-week pilot into a nine-month rollout.

Gartner reports that 57% of I&O leaders who reported at least one AI failure said initiatives failed because they expected too much, too fast. The instinctive reading is an ambition problem. The accurate reading is a sequencing problem. "Too fast" means the program tried to deploy production analytics AI before the analytics estate was ready to support it. The pilot worked on curated data with a curated definition set. Production has neither.

Why Context Is the Gating Factor

The specific slowdown pattern is this. Every new question the AI is asked in production requires an analyst to confirm the underlying definitions, reconcile conflicting metrics across source systems, and approve the wording the AI will return. This work is invisible on the engineering roadmap, which is why it rarely gets planned for. It is also the single largest consumer of time between pilot and scaled rollout.

Here is a concrete example. A finance leader asks for churn by cohort for the last four quarters. The demo-era answer comes back in seconds. The production-era answer requires an analyst to confirm which churn definition is in use across Finance, Product, and Customer Success, which cohort boundary applies (signup month, go-live month, first-invoice month), and which four quarters count given the current fiscal calendar. Three analysts, six Slack threads, and one week later, the AI returns the number. Velocity did not collapse because of the model. It collapsed because the estate carries three definitions and the AI cannot resolve that on its own.
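To make the cohort-boundary ambiguity concrete, here is a minimal Python sketch. Everything in it is hypothetical: the customer records, the field names (`signup`, `go_live`, `churned`), and the `churn_by_cohort` helper are invented for illustration and do not come from any real system. It shows only that the same four customers produce different "churn by cohort" tables depending on which date defines the cohort.

```python
from collections import defaultdict
from datetime import date

# Hypothetical customer records; all names and values are invented for illustration.
CUSTOMERS = [
    {"id": "a", "signup": date(2025, 1, 20), "go_live": date(2025, 2, 10), "churned": True},
    {"id": "b", "signup": date(2025, 1, 5), "go_live": date(2025, 1, 25), "churned": False},
    {"id": "c", "signup": date(2025, 2, 3), "go_live": date(2025, 2, 28), "churned": False},
    {"id": "d", "signup": date(2025, 2, 14), "go_live": date(2025, 3, 2), "churned": True},
]


def churn_by_cohort(customers, boundary):
    """Return {"YYYY-MM": churn rate}, grouping customers by the month of the `boundary` date field."""
    totals = defaultdict(int)
    churned = defaultdict(int)
    for customer in customers:
        cohort = customer[boundary].strftime("%Y-%m")
        totals[cohort] += 1
        churned[cohort] += int(customer["churned"])
    return {cohort: churned[cohort] / totals[cohort] for cohort in sorted(totals)}


# Same customers, two cohort boundaries, two different answers.
by_signup = churn_by_cohort(CUSTOMERS, "signup")
by_go_live = churn_by_cohort(CUSTOMERS, "go_live")
print(by_signup)
print(by_go_live)
```

Neither table is wrong. They answer different questions, which is why someone has to decide which boundary is the certified one before an AI can answer without review.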

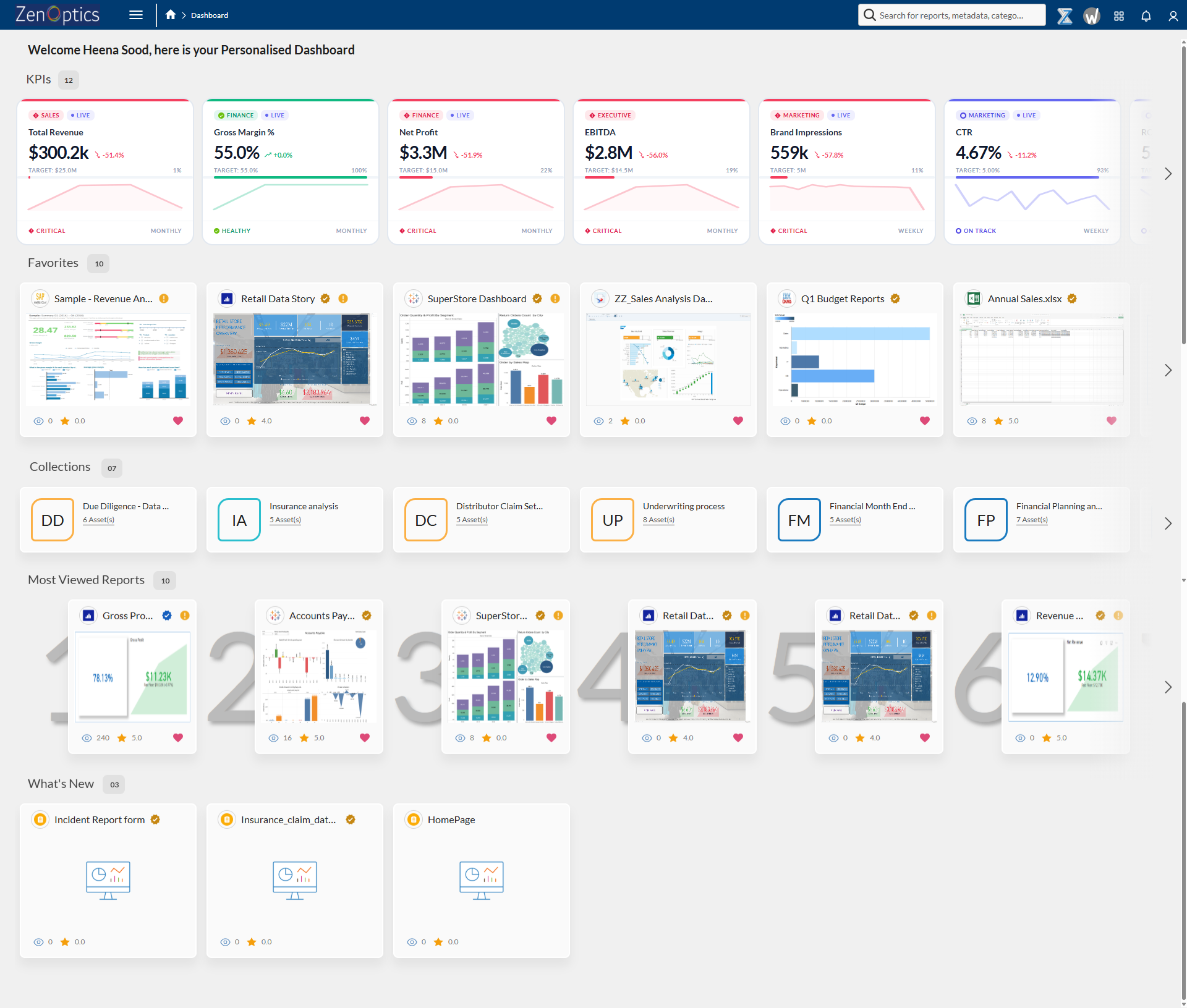

The substrate that resolves this sits inside The Decision Intelligence Platform. ZenOptics addresses the problem by establishing a certified analytics foundation and a shared context layer that AI can rely on. That layer is Nexus, the Analytics Context Layer. It establishes certified business definitions, semantic relationships, and trusted metrics once, and it grounds every analytics AI answer in those definitions automatically. The analyst-hours that used to gate every production question become a one-time setup cost, not a per-question tax.

The Time-to-Value Blueprint for Enterprise Analytics AI

Compressing enterprise analytics AI time to value requires certifying the estate and the context before the AI is turned loose on production questions. In ZenOptics terms, that work is done by Atlas and Nexus, operating together under The Decision Intelligence Platform.

Certify the Estate With Atlas

Atlas is the analytics system of record for the enterprise. It inventories the BI estate across Tableau, Power BI, Looker, ThoughtSpot, or any combination of them, and it surfaces which reports are trusted, which are duplicates, and which the business should retire. Without this layer, analytics AI lands on a chaotic estate and inherits its chaos. Every production question then invites a debate about which dashboard the AI drew from. With Atlas in place, the estate is certified before the AI is asked a question.

Ground the AI in Certified Context With Nexus

Nexus captures the business definitions, certified metrics, and semantic relationships that the AI needs to answer production questions without per-question analyst review. When a leader asks about churn, Nexus answers with the certified definition and the lineage behind it. The definition work that used to happen per question is done once, centrally, and reused across every downstream analytics AI surface.
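The "defined once, reused everywhere" idea can be sketched as a small lookup structure. This is an illustration of the general pattern only, not the Nexus API: the `MetricDefinition` and `ContextRegistry` classes, their fields, and the sample definitions are all hypothetical. The sketch assumes a metric is answerable only when exactly one certified definition exists for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """Hypothetical record for one team's definition of a metric."""
    name: str
    owner: str
    expression: str
    certified: bool


class ContextRegistry:
    """Hypothetical store that resolves a metric name to its single certified definition."""

    def __init__(self):
        self._definitions = {}

    def register(self, definition):
        self._definitions.setdefault(definition.name, []).append(definition)

    def resolve(self, name):
        certified = [d for d in self._definitions.get(name, []) if d.certified]
        if len(certified) != 1:
            # Zero or several certified definitions: the question still needs a human.
            raise LookupError(
                f"{name!r} has {len(certified)} certified definitions; expected exactly 1"
            )
        return certified[0]


registry = ContextRegistry()
# Three competing churn definitions (invented); only one has been certified.
registry.register(MetricDefinition("churn", "Finance", "lost_arr / starting_arr", True))
registry.register(MetricDefinition("churn", "Product", "inactive_users / active_users", False))
registry.register(MetricDefinition("churn", "Customer Success", "cancelled / renewable", False))

churn = registry.resolve("churn")
print(churn.owner, churn.expression)
```

The design choice worth noting is that `resolve` fails loudly when a metric has no certified definition or more than one, rather than picking one silently. Under this assumption, ambiguity surfaces at certification time instead of at question time.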

Operationalize With ZIVA

ZIVA is the conversational surface the business actually sees. ZIVA operationalizes the Atlas-certified estate and the Nexus-certified context so that a business leader's question returns a governed answer grounded in certified definitions. The user experience is a question and an answer. The substrate doing the work is invisible and fast.

Organizations implementing ZenOptics typically see analytics AI deployments stand up two to three times faster than programs that skip the context layer. The compression is not coming from a better model. It is coming from work that was going to happen anyway, done once and done centrally, instead of per question and in parallel across teams.
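The trade between per-question review and one-time certification reduces to a break-even calculation. The sketch below is a back-of-the-envelope model under stated assumptions, not a ZenOptics benchmark: the function name, its parameters, and the hour figures are all hypothetical, chosen only to show the shape of the arithmetic.

```python
import math


def break_even_questions(setup_hours, per_question_hours, residual_hours=0.0):
    """Smallest question count at which one-time certification costs no more
    analyst time than reviewing every question individually.

    setup_hours: one-time analyst effort to certify definitions (assumed).
    per_question_hours: analyst effort per question without certified context (assumed).
    residual_hours: analyst effort per question that remains after certification (assumed).
    """
    saved_per_question = per_question_hours - residual_hours
    if saved_per_question <= 0:
        raise ValueError("certification must save time per question to break even")
    return math.ceil(setup_hours / saved_per_question)


# Hypothetical inputs: 400 hours of setup, 8 hours of review per uncertified
# question, 0.5 hours of residual review once definitions are certified.
n = break_even_questions(setup_hours=400, per_question_hours=8, residual_hours=0.5)
print(n)  # 54
```

With these invented inputs, certification pays for itself by the 54th production question. Real figures will differ by estate, but the structure holds: the more questions a program expects to answer, the stronger the case for doing the definition work up front.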

What Fast Analytics AI Time to Value Looks Like

A fast enterprise analytics AI deployment has recognizable signatures. The first business unit stands up in weeks, not quarters, because the Atlas-certified estate and the Nexus-certified context layer are in place before the AI is switched on. The second business unit inherits most of the context from the first, so its rollout takes less time than the first rather than repeating it. Questions that used to pause for analyst validation come back in seconds, because the definitions they depend on are already certified. The CFO is signing off on AI-generated numbers inside the first rollout, not waiting until the third or fourth.

Gartner projects that through 2026, organizations without an AI-ready data practice will see over 60% of AI projects fail to deliver on business SLAs and be abandoned. The programs that compress time to value are the ones that treat the context substrate as pre-work, not post-work. Certify the estate. Certify the meaning. Then turn the AI on.

Where to Go Next

If you have not read the overview yet, start with Analytics AI at Enterprise Scale: Why the Value Gap Is a Context Gap for the full value-realization framework behind this argument.

If autonomous analytics AI agents are on your roadmap and governance is the next unsolved problem, read Governing Autonomous Analytics AI at Enterprise Scale: Beyond Cybersecurity.

FAQ: Analytics AI Time to Value at Enterprise Scale

What is analytics AI time to value?

Analytics AI time to value is the elapsed time from the first funded analytics AI pilot to a production deployment the business measurably trusts. It spans demo, pilot, first business unit rollout, and the point at which leadership routinely acts on AI-generated analytics answers. In 2026 it has become the key measurement for enterprise analytics AI program health.

Why does enterprise analytics AI deployment take so long?

Most enterprise analytics AI deployment time is consumed by per-question context work: reconciling conflicting business definitions, validating metric lineage, approving the wording the AI will use. This work does not appear on the engineering roadmap, so it is often miscounted as training, tuning, or change management. The actual bottleneck is the absence of a certified Analytics Context Layer.

How do you reduce analytics AI time to value?

The fastest way to reduce analytics AI time to value is to certify the analytics estate and the business context before production rollout, rather than in parallel with it. ZenOptics does this with Atlas as the certified analytics system of record and Nexus as the Analytics Context Layer. Organizations implementing ZenOptics typically see analytics AI deployments stand up two to three times faster than programs that skip the context substrate.

Why do analytics AI projects fail?

Analytics AI projects most often fail because the analytics estate underneath the AI is not ready. Definitions conflict across business units, metrics are not certified, and governance is reactive. Gartner found that 57% of I&O leaders who reported at least one AI failure said initiatives failed because they expected too much, too fast. The honest reading is that the estate was not prepared to support production AI, and the AI made the gap visible.

Is analytics AI time to value different from general AI time to value?

Yes, and the difference lies in what gates each one. General AI time to value is often model-bound, which means it is gated by training, tuning, or integration with source systems. Analytics AI time to value is context-bound, which means it is gated by business definitions, metric certification, and the trustworthiness of the underlying analytics estate. A faster model does not close the analytics AI time-to-value gap. A certified context substrate does.

Published April 28, 2026