Enterprise organizations are spending more on AI than at any point in history. The models are more capable, the integrations are faster, and the vendor ecosystem is more mature. And yet the pattern from the past three years is consistent: AI investments deployed against analytics produce outputs that teams find plausible, sometimes useful, and rarely trusted enough to act on without manual verification. The problem is not the AI model. The problem is the layer beneath it that most AI deployments are missing entirely.

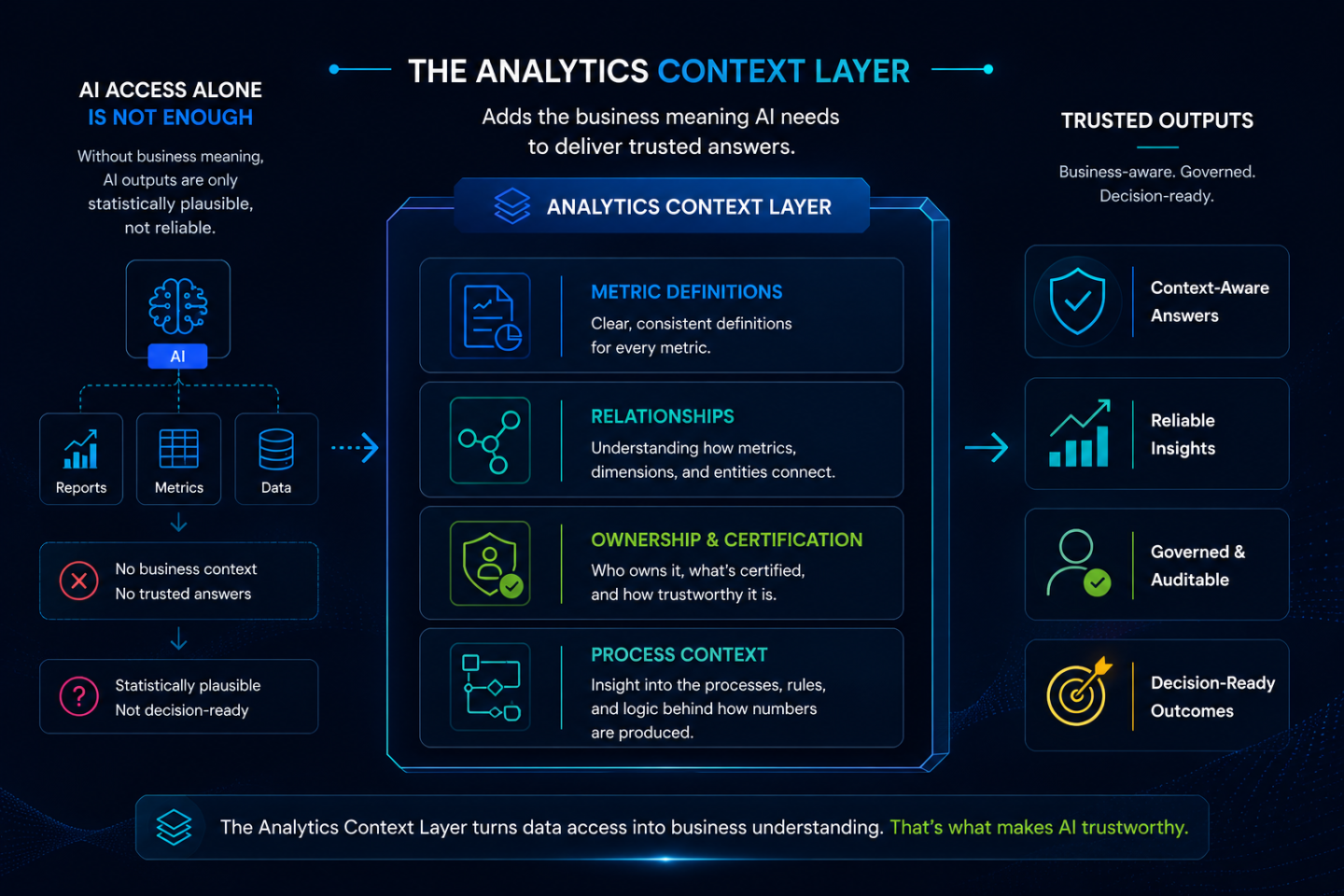

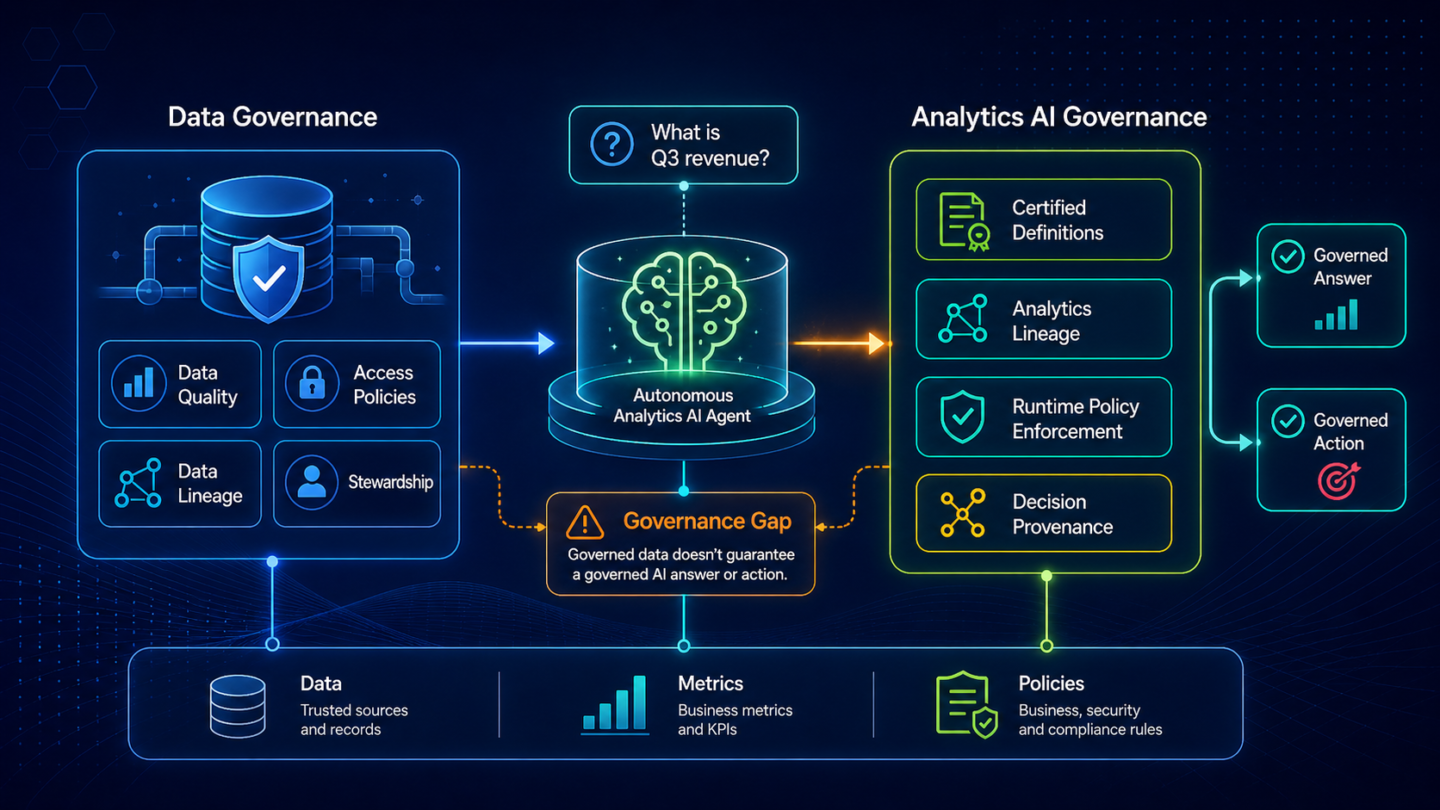

The analytics context layer is the structured representation of what your business metrics mean: how they are defined, how they relate to each other, who owns their definitions, and what process governs their use. Without it, AI systems fill the gaps with statistical inference. With it, AI systems produce answers that are grounded in the organization’s actual business logic. That difference, the context gap, is the most common reason analytics AI investments stall in enterprise environments.

The assumption behind most enterprise AI deployments is that access is the bottleneck. If AI tools can connect to the data, they can use the data. This assumption underlies most data integration projects, most BI modernization programs, and most AI readiness assessments. It is also the reason so many of those investments produce less than expected.

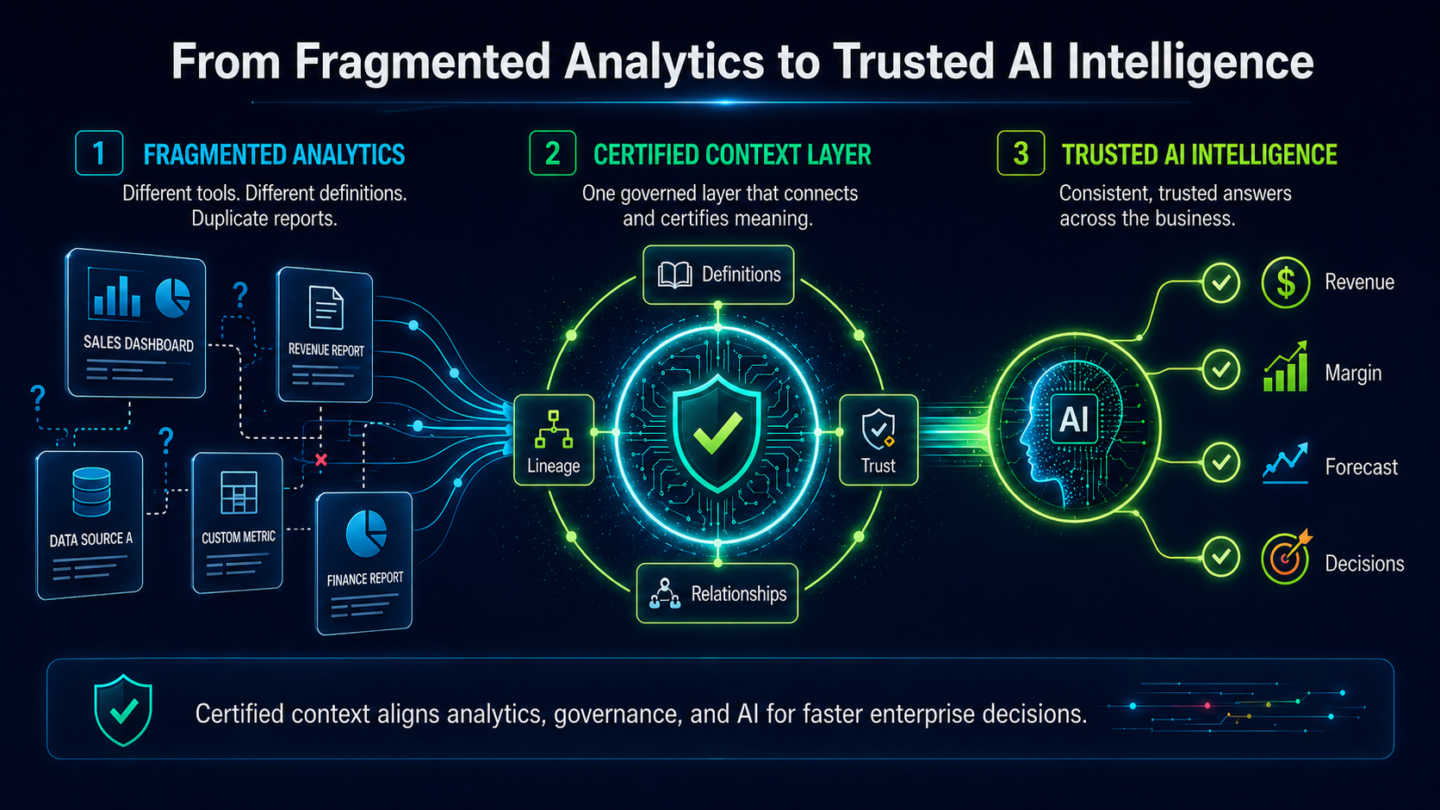

Access and understanding are different things. An AI system given access to an enterprise’s BI environment can read every report, query every metric, and return answers to any question it is asked. What it cannot do, without a context layer, is understand what those metrics mean in this organization. It cannot know that “revenue” in the commercial team’s reporting excludes the adjustments the finance team applies. It cannot know that two reports with different names are measuring the same thing, or that two metrics with the same name are measuring different things depending on which business unit produced them. It cannot know which version of a KPI is authoritative for which reporting purpose, or that a metric’s definition was revised six months ago and only applies to the current period.

These are not edge cases. They are the structural realities of enterprise analytics environments that have accumulated over years of BI investment across multiple tools, teams, and reporting cycles. AI systems operating without a context layer encounter all of it indiscriminately. They produce statistically reasonable answers and contextually wrong ones at unpredictable intervals. That unpredictability is what prevents the outputs from being trusted.

An analytics context layer is the machine-readable, structured representation of the business meaning embedded in an enterprise’s analytics estate. It captures four categories of information that AI systems need to produce reliable, trusted outputs.

The first is metric definitions: what each KPI, metric, and measure means in precise business terms, including how it is calculated, what its boundaries are, and how it differs across business units or reporting contexts where the same term carries different meanings.

The second is relationships: how metrics relate to each other, which KPIs roll up into which business outcomes, which reports draw on which underlying metrics, and what the lineage is between raw data and the numbers that appear in executive dashboards.

The third is ownership and certification: which analytics assets have been designated as authoritative, who is accountable for their definitions, when they were last reviewed, and whether they are in current use or carry historical-only status.

The fourth is process context: which business processes each metric informs, what the decision workflow looks like around that metric, and what governance rules apply to AI systems that operate within that workflow.

Together, these four components give AI systems the business intelligence they need to move from statistically probable answers to contextually correct ones. They are the difference between an AI that knows your organization has a “net revenue” metric and an AI that knows what “net revenue” means in the context of your commercial team’s Q3 reporting cycle.
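As a rough illustration of the four categories described above, a single context-layer entry could be modeled as one record. This is a minimal sketch, not ZenOptics's schema: the class name `MetricContext`, every field name, and the example values are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class MetricContext:
    """One context-layer entry. All field names are hypothetical."""
    # 1. Metric definition
    name: str
    definition: str
    calculation: str
    business_unit: str
    # 2. Relationships
    rolls_up_to: list[str] = field(default_factory=list)
    used_in_reports: list[str] = field(default_factory=list)
    # 3. Ownership and certification
    owner: Optional[str] = None
    certified: bool = False
    last_reviewed: Optional[date] = None
    historical_only: bool = False
    # 4. Process context
    informs_processes: list[str] = field(default_factory=list)
    governance_rules: list[str] = field(default_factory=list)

    def missing_categories(self) -> list[str]:
        """Return the categories that are still empty for this entry."""
        missing = []
        if not (self.definition and self.calculation):
            missing.append("definition")
        if not (self.rolls_up_to or self.used_in_reports):
            missing.append("relationships")
        if self.owner is None or self.last_reviewed is None:
            missing.append("ownership_and_certification")
        if not (self.informs_processes or self.governance_rules):
            missing.append("process_context")
        return missing


# Illustrative values only.
net_revenue = MetricContext(
    name="net_revenue",
    definition="Revenue after adjustments, as used by the commercial team",
    calculation="gross_revenue - adjustments",
    business_unit="commercial",
    rolls_up_to=["quarterly_revenue_performance"],
    owner="finance-analytics",
    certified=True,
    last_reviewed=date(2024, 9, 30),
)
print(net_revenue.missing_categories())  # ['process_context']
```

Keeping all four categories on one record makes it easy to see when an entry is only partly described, as in the example, where the process context has not yet been filled in.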

Semantic layers, as developed in the BI context, address the translation between raw data schema and business-readable metric names. They sit between the data warehouse and the BI tool, mapping technical field names to business-language labels. They are valuable infrastructure.

They are not the same as an analytics context layer. A semantic layer handles the naming and basic calculation logic for metrics. An analytics context layer handles the organizational meaning behind those metrics: ownership, process context, certification status, cross-metric relationships, and the governance rules that determine how AI systems should use them. A semantic layer tells an AI system what “net revenue” is. An analytics context layer tells an AI system what “net revenue” means in this organization, for this reporting purpose, under this governance policy, and with this certification status.

The distinction matters for AI deployment because AI systems operating at the analytics layer need the organizational meaning, not just the technical definition. A semantic layer is a prerequisite for structured AI access. An analytics context layer is what makes that access produce trusted outputs.

Data catalogs address the raw data and infrastructure layer: what datasets exist, where they live, what their technical schema is, and what the data quality is at the record level. Data catalogs are data engineering infrastructure.

An analytics context layer addresses the business intelligence layer: what metrics and dashboards mean, who owns them, how they relate to business decisions, and whether AI systems can trust them as authoritative sources for the decision they are supporting. These are different layers, different audiences, and different functions.

ZenOptics is explicit on this point: it is purpose-built for dashboards, metrics, and reports (the layer where business decisions actually happen) and is not a data catalog. A data catalog governs raw data so analysts can find and understand datasets. An analytics context layer governs certified analytics so AI systems can use them correctly and so the decisions AI influences can be traced, audited, and explained.

Organizations that have invested in data catalog programs sometimes assume the catalog addresses their AI context needs. It does not. The catalog tells data engineers what data exists. The analytics context layer tells AI systems what certified analytics mean and how they can be acted on.

With a properly built analytics context layer in place, three capabilities become available that are not feasible without it.

The first is AI accuracy at business scale. AI tools connected to a context layer produce answers grounded in the organization’s approved metric definitions, not statistical inferences. When an executive asks the AI system about revenue performance, the system draws on the certified version of the metric (the one the CFO has approved for quarterly reporting) rather than whichever report happens to be most statistically similar to the query. The difference between those two answers is the difference between AI that confirms what the team already knows and AI that produces numbers no one can reconcile.
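The contrast between the two selection behaviors can be sketched in a few lines. This is an illustrative example under stated assumptions: `MetricVersion`, `pick_by_similarity`, `pick_certified`, and the `similarity` score are hypothetical names, not part of any product API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MetricVersion:
    metric: str
    source_report: str
    certified: bool
    approved_for: frozenset  # reporting purposes this version is approved for
    similarity: float        # hypothetical similarity score to the user's query


def pick_by_similarity(candidates):
    """Without context: the closest-looking report wins."""
    return max(candidates, key=lambda c: c.similarity)


def pick_certified(candidates, purpose) -> Optional[MetricVersion]:
    """With context: only certified versions approved for this purpose qualify."""
    eligible = [c for c in candidates if c.certified and purpose in c.approved_for]
    if not eligible:
        return None  # refuse rather than guess
    return max(eligible, key=lambda c: c.similarity)


candidates = [
    MetricVersion("revenue", "Regional Campaign Revenue", False, frozenset(), 0.93),
    MetricVersion("revenue", "CFO Quarterly Revenue", True,
                  frozenset({"quarterly_reporting"}), 0.81),
]
print(pick_by_similarity(candidates).source_report)
# Regional Campaign Revenue
print(pick_certified(candidates, "quarterly_reporting").source_report)
# CFO Quarterly Revenue
```

Returning `None` when no certified version exists for the purpose is a design choice in this sketch: an explicit refusal is easier to audit than a plausible guess.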

The second is faster deployment of new AI use cases. The largest hidden cost in enterprise AI deployment is the manual semantic build work that precedes each new use case: defining what metrics mean for the AI, mapping relationships, and constructing the business context the AI needs to operate. Organizations implementing ZenOptics typically see AI deployment timelines compress by a factor of two to three once the automated context layer is in place, because the context layer is derived from existing BI metadata rather than built from scratch for each new AI application.

The third is the ability to govern AI execution. A governed analytics context layer is the foundation for decision traceability: every AI-driven action can be traced back to the certified metric that informed it, through the business context that grounded the recommendation, to the outcome it produced. Without the context layer, that trace does not exist. Every AI action is a black box. For the architecture that connects the context layer to governed agent execution, Agentic Analytics in the Enterprise: From Pilot to Production covers the full three-layer model.

The traditional approach to building an analytics context layer is manual. A team of analysts or data engineers inventories the existing BI estate, maps metric definitions, documents relationships, and constructs the semantic and business context from scratch. This work takes months before the first AI use case can be deployed against it. Whenever the business evolves (definitions change, metrics are added, or BI tools are replaced), the manual build work begins again.

Automated context generation is a different approach. Rather than constructing the context layer from scratch, it derives the semantic structure, metric definitions, relationships, and business logic that already exist within the organization’s BI metadata. The context layer is built from what is already there: the headers, schemas, query patterns, ownership records, and certifications that the BI estate already contains. The result is a context layer that is ready to serve AI systems without months of manual construction, and that updates automatically as the estate evolves rather than decaying between rebuild cycles.

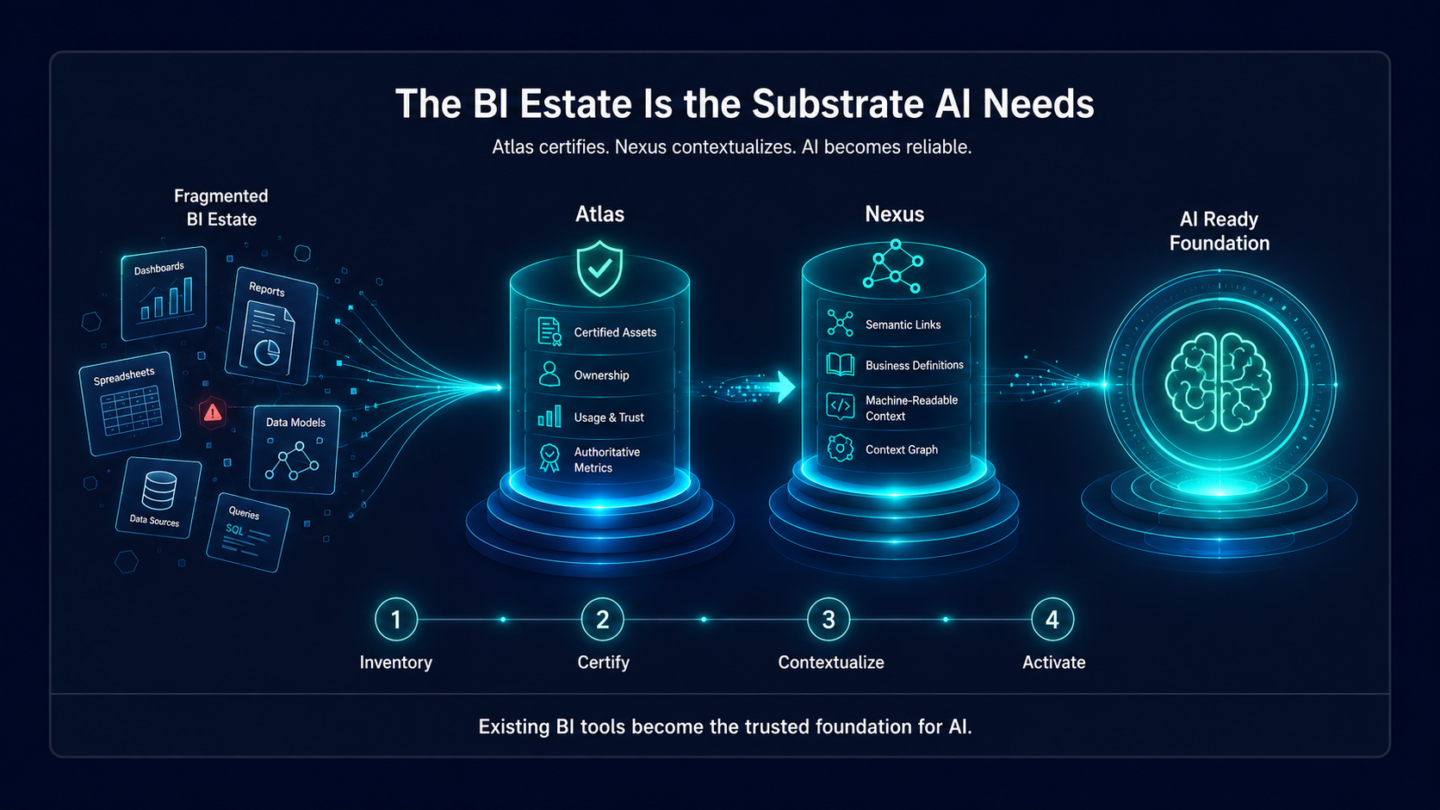

Nexus, ZenOptics’s AI Context Layer for Analytics, is built on this principle. Nexus onboards the metadata from the organization’s BI tools through Atlas, automatically derives the context layer from what exists, and makes it machine-readable for any AI tool in the organization’s stack. The context layer is maintained continuously as the estate changes, so new AI use cases can be deployed against the same trusted foundation rather than requiring a new manual build each time.

Organizations implementing ZenOptics typically see analytics discovery improve 20 to 40 percent once the context layer is in place, because every metric is surfaced with its certified definition, ownership, and relationship context rather than requiring analysts to reconstruct that information from institutional knowledge each time.

What is an analytics context layer? An analytics context layer is the machine-readable, structured representation of what an enterprise’s business metrics mean. It captures metric definitions, KPI relationships, ownership and certification status, and the process context that governs how analytics assets are used in business decisions. It is the layer between AI systems and BI data that enables AI to produce outputs grounded in the organization’s actual business logic rather than statistical inference.

How is an analytics context layer different from a semantic layer? A semantic layer maps raw data fields to business-readable metric names and handles basic calculation logic. An analytics context layer addresses a broader set of information: who owns each metric’s definition, what its certification status is, how it relates to other metrics in a governed hierarchy, and what business process context applies when AI systems use it. A semantic layer is necessary infrastructure for structured AI access; an analytics context layer is what makes that access produce contextually correct, trusted outputs. The two layers are complementary but address different problems.

Why do AI tools fail without an analytics context layer? Without a context layer, AI systems fill the gaps in their understanding with statistical inference. They can observe that two metrics are related based on how they appear together in queries and reports, but they cannot know which version of a metric is authoritative, what the governance rules around it are, or how its definition differs across business units. The result is answers that are statistically plausible but contextually wrong in ways that are difficult to predict and hard to diagnose, a pattern that erodes stakeholder trust over time.

Can you build an analytics context layer manually? You can, and many organizations have attempted it. Manual construction of an analytics context layer involves inventorying BI assets, documenting metric definitions, mapping relationships, and building the semantic structure by hand. The challenges are timeline (months of work before the first AI use case can deploy against it), maintenance (the context layer decays as the business evolves unless it is continuously updated), and coverage (manual builds typically cover a fraction of the full BI estate). Automated context generation (deriving the context layer from existing BI metadata rather than constructing it from scratch) addresses all three challenges.

How does the analytics context layer connect to AI agent governance? The analytics context layer is the foundation for governed AI execution. When AI agents operate within a context layer, every action they take can be traced back to the certified metric that informed it, through the approved business definitions that grounded the recommendation. Without that context layer, the trace does not exist, and agent actions are black boxes. The context layer is what makes decision traceability possible, which is the mechanism by which governed AI execution satisfies compliance, risk, and audit requirements.

How long does it take to build an analytics context layer with ZenOptics? With automated context generation through Nexus, the initial context layer is derived from existing BI metadata rather than built from scratch, significantly compressing the timeline compared to manual approaches. Organizations implementing ZenOptics typically see AI deployment timelines move two to three times faster than manual semantic build approaches, because the context layer is available for AI use as soon as the metadata onboarding is complete rather than after months of manual construction. The context layer also updates continuously as the BI estate evolves, rather than requiring periodic rebuild cycles.

Enterprise analytics teams are no longer debating whether to adopt AI agents. Gartner estimates that 40% of enterprise applications will include task-specific AI agents by 2026. The adoption curve is steep, the pressure from leadership is real, and the vendors are ready. What most enterprise architectures are not ready for is the question that follows deployment: when an AI agent influences a material business decision, what is the auditable record of how that decision was made?

In regulated industries, that question is not rhetorical. It is a compliance requirement. And for most agentic analytics architectures currently in production or moving toward it, the honest answer is: there is no auditable record. The agent acted, the decision was made, and the reasoning path cannot be reconstructed.

The governance deferral pattern is familiar. A team deploys an agentic analytics workflow, governance is scoped as a future phase, and the deployment moves forward on the strength of the demo results. For a period, this works. The pilot performs well, the outputs look credible, and the governance gap is invisible.

The gap surfaces in one of two ways. The first is operational: an AI-influenced decision produces an outcome that needs to be reviewed, and the team discovers that the agent’s reasoning cannot be traced. Which metric informed the recommendation? What business context shaped the analysis? What process boundary, if any, did the agent operate within? If those records were not captured at the time of execution, they cannot be retrieved.

The second is regulatory: an auditor, a regulator, or a board risk committee asks for documentation of how an AI-assisted decision was made. The absence of decision traceability is not a documentation gap that can be closed after the fact. It is evidence that the decision process itself was not governed. In financial services, insurance, and healthcare, that exposure is not recoverable through retroactive documentation.

Gartner projects that by 2030, 50% of AI agent deployment failures will be due to insufficient governance platform runtime enforcement. The organizations behind those failures are not making a considered trade-off. They are deferring governance until the cost of deferral is higher than the cost of rebuilding from scratch.

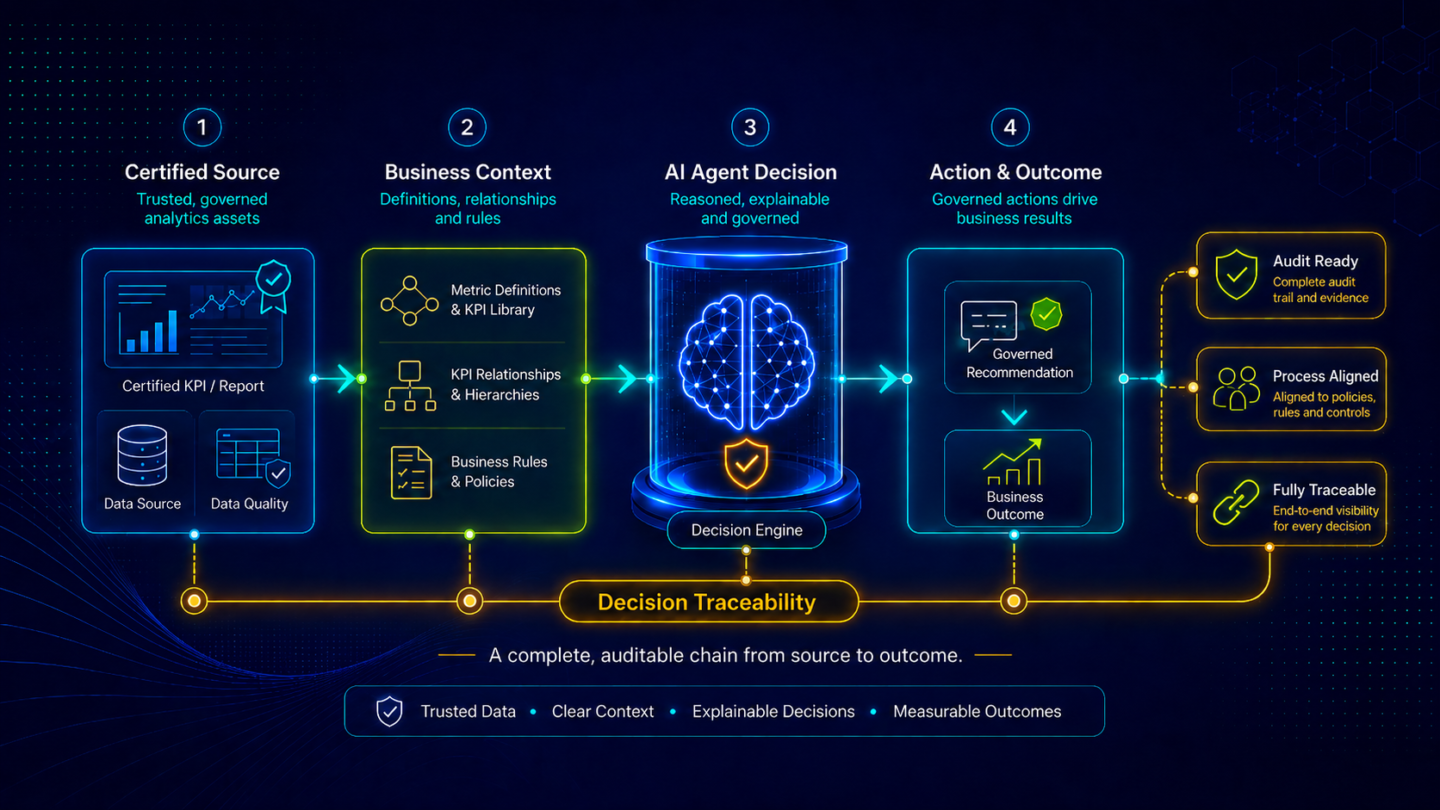

Decision traceability is a specific technical and governance capability. It is not an audit log that records what queries were run, and it is not a general AI safety framework that monitors model outputs. In the enterprise analytics context, it is the complete, end-to-end capture of the reasoning chain behind an AI-influenced decision.

That chain has four components. First, the certified source: which analytics asset, with which certification status and ownership record, provided the data that grounded the agent’s analysis. Second, the business context: which metric definitions, KPI relationships, and process rules the agent was operating within when it generated its recommendation. Third, the recommendation itself: what the agent surfaced, in what form, and under what parameters. Fourth, the action and outcome: what the agent triggered or recommended as a next step, what process that action was aligned to, and what business outcome it produced.

A trace that captures only some of these components is not decision traceability. In regulated environments, partial traces are not treated as partial compliance. They are treated as no compliance. The audit standard is completeness. An AI agent operating in enterprise analytics must carry a full chain of custody from the certified metric that triggered its analysis to the outcome its action produced.
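The completeness standard described above can be expressed as a simple check: a trace is auditable only if all four components are present. The sketch below is illustrative; `DecisionTrace` and its field names are hypothetical and chosen to mirror the four components in the text.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DecisionTrace:
    """The four components of the reasoning chain (names are illustrative)."""
    certified_source: Optional[str] = None
    business_context: Optional[str] = None
    recommendation: Optional[str] = None
    action_and_outcome: Optional[str] = None

    def missing(self) -> list[str]:
        """List the components that were not captured."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_auditable(self) -> bool:
        """A partial trace counts as no trace: all four parts must be present."""
        return not self.missing()


partial = DecisionTrace(
    certified_source="net_revenue (certified, owner: finance)",
    business_context="Q3 commercial reporting definition",
    recommendation="Flag region X for review",
)
print(partial.missing())       # ['action_and_outcome']
print(partial.is_auditable())  # False
```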

This is a more demanding standard than most organizations applying general AI governance frameworks are currently meeting. General AI governance addresses questions like: is the model performing within acceptable parameters, is the output biased, and is the system secure? Those are required conditions. The analytics governance requirement goes further: is every action traceable to a certified business metric, is the recommendation grounded in the organization’s own approved context, and is the decision lineage complete enough to satisfy an audit?

The governance requirement is not a future state. The regulatory frameworks driving it are already in effect or in active enforcement planning.

The EU AI Act, which entered into force in August 2024 and whose obligations apply in stages through 2027, establishes a risk-based classification for AI systems. Analytics-driven decisions in regulated industries (credit risk, insurance underwriting, and certain operational decision workflows) may fall within the high-risk classification under the Act’s Annex III. High-risk AI systems require documented governance, human oversight mechanisms, and full traceability of how the system reached its outputs. Organizations deploying AI agents in these contexts without a governed execution layer face compliance exposure under the Act’s requirements.

In financial services, the SEC and OCC have both issued guidance on AI governance for regulated entities. The common thread across regulatory frameworks: accountability for AI-influenced decisions cannot be waived by pointing to the model as a black box. The organization is accountable for the decision, which means the organization must be able to trace how that decision was made.

Enterprise risk frameworks are converging on the same requirement independently of specific regulations. Chief Risk Officers and enterprise audit functions are asking analytics and data leaders to demonstrate that AI agents operate within defined boundaries, that their actions are aligned to approved business processes, and that decision outputs are attributable. In organizations where those records do not exist, AI agent deployments are being paused or restricted pending governance architecture remediation.

General AI governance frameworks address the behavior of AI models across domains: safety, bias, output reliability, and access controls. These are necessary capabilities and most mature enterprises have some version of them in place.

Analytics agent governance addresses a more specific requirement: the governance of AI agents that operate within the enterprise analytics and business intelligence environment, where every output is tied to certified business metrics, regulated reporting cycles, and decisions made by senior leaders who are accountable for them.

The distinction matters because general AI governance does not provide what analytics agent governance requires. A model monitoring framework that confirms the agent is operating within statistical norms does not confirm that the agent’s recommendation was grounded in the certified version of a financial metric rather than a shadow version built by a regional team. A bias monitoring framework that confirms output fairness does not confirm that the agent’s action was aligned with the approved business process that governs that decision type. An access control framework that confirms only authorized users triggered the agent does not capture whether the agent’s KPI references were authoritative.

For a detailed treatment of the distinction between general AI governance and analytics governance, Governing Autonomous Analytics AI at Enterprise Scale maps the gap that most enterprise governance programs have not yet closed.

Building the governed AI execution environment for enterprise analytics is a three-layer project, and the layers must be built in sequence.

The foundation is a certified analytics estate. Every analytics asset that an AI agent might query must have a known certification status, an active owner, and a clear designation of whether it is an authoritative source for the relevant metric. An agent operating on an ungoverned estate will ground its analysis in whatever it finds, certified and uncertified assets alike. The decision lineage that follows is not auditable because the data foundation is not itself certified. This layer must be complete before governance of agent actions can be meaningful.

The second layer is the analytics context layer. Governed execution requires that every agent action be grounded in the organization’s approved business definitions, not in the agent’s statistical inferences. The context layer makes those definitions machine-readable: what each metric means, how it relates to other metrics, which definitions are authoritative for which reporting contexts, and what the approved process boundaries are for decisions in each domain. Without this layer, the agent’s actions cannot be traced to business-approved logic. They can only be traced to what the model computed.

The third layer is the governed execution environment itself. This is where decision traceability is operationalized: every AI-driven action is mapped to the certified metric that informed it, aligned to the approved business process that governs it, and recorded with the full decision lineage from input to recommendation to outcome. The governed execution layer also monitors agent behavior in real time, enforcing process boundaries and flagging actions that fall outside defined parameters before they produce outcomes that require remediation.
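To make the three layers concrete, the sketch below shows one way an execution wrapper could check each layer before an agent action runs and record the lineage when it does. It is a simplified illustration under stated assumptions, not a description of how Maestro or any other product is implemented; `GovernedExecutor`, `BoundaryViolation`, and all field names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class BoundaryViolation(Exception):
    """Raised when an agent action falls outside its approved boundaries."""


@dataclass
class GovernedExecutor:
    certified_assets: set   # layer 1: certified analytics estate
    definitions: dict       # layer 2: metric -> approved definition
    allowed_actions: dict   # layer 3: process -> permitted actions
    lineage: list = field(default_factory=list)

    def execute(self, metric, process, action, recommendation):
        # Check every boundary before anything is acted on.
        if metric not in self.certified_assets:
            raise BoundaryViolation(f"{metric} is not a certified asset")
        if metric not in self.definitions:
            raise BoundaryViolation(f"{metric} has no approved definition")
        if action not in self.allowed_actions.get(process, set()):
            raise BoundaryViolation(f"{action} is not permitted in {process}")
        record = {
            "at": datetime.now(timezone.utc).isoformat(),
            "certified_source": metric,
            "business_context": self.definitions[metric],
            "recommendation": recommendation,
            "process": process,
            "action": action,
            "outcome": None,  # filled in once the outcome is known
        }
        self.lineage.append(record)
        return record


executor = GovernedExecutor(
    certified_assets={"net_revenue"},
    definitions={"net_revenue": "gross_revenue - adjustments"},
    allowed_actions={"quarterly_review": {"flag_for_review"}},
)
executor.execute("net_revenue", "quarterly_review", "flag_for_review",
                 "Flag region X for review")
try:
    executor.execute("net_revenue", "quarterly_review", "adjust_forecast",
                     "Lower the Q4 forecast")
except BoundaryViolation as err:
    print(err)  # adjust_forecast is not permitted in quarterly_review
print(len(executor.lineage))  # 1
```

The blocked action never reaches the lineage list, which reflects the point in the text: boundaries are enforced before an action produces an outcome, not reconstructed afterward.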

For organizations working through the broader AI readiness architecture, the AI-ready analytics enterprise blueprint covers how the three layers connect at enterprise scale.

Maestro, ZenOptics’s Execution and Agent Control Layer, is built for this requirement. Maestro maps every AI-driven decision to the trusted analytics that informed it, enforces the process boundaries that govern agent actions, monitors agent behavior continuously, and captures full decision provenance at every step.

The decision provenance Maestro produces is not a general audit log. It is analytics-specific: every action is tied to the certified KPI that triggered the analysis, through the business context that grounded the recommendation, to the approved process that governed the action, to the outcome it produced. That chain is the auditable record that compliance, risk, and executive stakeholders require, and that regulators increasingly expect.

Maestro does not operate in isolation. It draws on Atlas for the certified analytics estate and on Nexus for the machine-readable context layer. The three layers together produce the governed execution environment that makes AI agent deployment viable in regulated enterprise environments.

For organizations that have closed the foundational gaps and are ready to understand what the full production-ready architecture looks like, Agentic Analytics in the Enterprise: From Pilot to Production maps the complete sequence.

What is AI agent governance for enterprise analytics? AI agent governance for enterprise analytics is the set of controls, processes, and technical infrastructure that ensures AI agents operating in analytics environments act within approved boundaries, grounded in certified business data, with every action fully auditable. It is distinct from general AI governance because it addresses the specific requirements of analytics-driven decisions: certified metric sources, approved business process alignment, and complete decision lineage from KPI to recommendation to outcome.

What is decision traceability and why does it matter for enterprise AI? Decision traceability is the complete, end-to-end capture of the reasoning chain behind an AI-influenced decision. For enterprise analytics, that chain runs from the certified metric that triggered the analysis, through the business context that grounded the recommendation, through the process boundaries the agent operated within, to the action it triggered and the outcome it produced. Decision traceability matters because it is the auditable record that compliance and risk stakeholders require, and because partial traces are treated as no trace in most regulated environments.

How does the EU AI Act affect enterprise analytics deployments? The EU AI Act establishes a risk-based classification for AI systems. Analytics-driven decisions in regulated industries, particularly financial services and healthcare, may qualify as high-risk AI applications under the Act’s framework. High-risk AI systems require documented governance mechanisms, human oversight, and full traceability of how the system reached its outputs. Organizations deploying AI agents in these contexts without a governed execution layer face compliance exposure under the Act’s requirements as they come into full force.

What distinguishes analytics agent governance from general AI governance? General AI governance addresses model behavior across domains: output reliability, bias monitoring, safety controls, and access management. Analytics agent governance addresses the additional requirements specific to the business intelligence context: every AI action must be grounded in certified business metrics, aligned to approved business processes, and recorded with the complete decision lineage that analytics-specific compliance requires. General AI governance is a required condition for analytics agent governance, but it does not satisfy the analytics-specific traceability standard on its own.

Can AI agent governance be added after an agent is already deployed? Governance can be retrofitted, but at significant cost and risk. The core challenge is decision lineage: if the trace from input to recommendation to action was not captured from the start, it cannot be reconstructed for decisions that have already been made. Organizations that add governance after deployment can protect future decisions, but the period of ungoverned operation remains unauditable. For organizations in regulated industries, that gap represents a period of compliance exposure that may require disclosure. Building governance in from the start is almost always less expensive than retrofitting it.

What does a governed AI agent execution environment look like in practice? A governed AI agent execution environment has three visible characteristics. First, every agent action is traceable to the certified analytics asset that informed it: the agent cannot operate on uncertified or unclaimed data. Second, every recommendation is grounded in machine-readable business definitions that reflect the organization’s approved KPI logic, not statistical inference. Third, every action the agent takes is aligned to an approved business process, monitored against defined behavioral boundaries, and recorded with the full decision lineage from source metric to outcome. In ZenOptics, Maestro is the execution layer that operationalizes all three.

The enterprise analytics industry has spent two years watching agentic AI demos succeed. Every major BI vendor has one. Most enterprises have run one. The story that does not make the conference keynote is what happens next: why so few of those demos become production deployments, and why the organizations that shut them down rarely understand the actual cause of failure. Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027. Most of those cancellations will not happen because the technology failed. They will happen because the enterprise environment underneath it was not ready.

An agentic analytics demo proves one thing: given a clean dataset and a well-scoped prompt, the AI model can return a structured output. That is a real capability, but it is not what production deployment requires.

A production deployment has to do something considerably harder. It has to produce outputs that are consistently trusted by the people who use them to make real decisions: not once, with a curated dataset, in a controlled environment, but reliably, across the full complexity of an enterprise analytics estate, against questions that were not in the demo script, grounded in business context the team was not aware of during the pilot, and with every action auditable by risk and compliance stakeholders who were not in the room.

The gap between those two things is where most pilots stall. It is not usually a model problem, a data pipeline problem, or an integration problem. It is three structural gaps in the enterprise environment that a pilot does not surface and a production deployment cannot survive.

A pilot environment is curated. Before the demo, the team selects a set of certified reports, defines which KPIs are in scope, and builds the pilot on that controlled subset. The agent performs well because it has been given trustworthy inputs.

Production is different. In production, the agent queries the full analytics estate, and most enterprise analytics estates are not curated. Organizations implementing ZenOptics typically find that 30 to 40 percent of their analytics estate consists of duplicate or conflicting reports. That means for every clean, certified version of a metric or dashboard, there are likely one or more shadow versions in the environment: built by different teams, using different calculation logic, for purposes that may have been relevant three years ago and are not today.

The agent has no visibility into any of this. It cannot see certification status. It cannot see that a report has no active owner. It cannot distinguish the revenue figure the CFO uses for quarterly reporting from the revenue figure a regional team built for a campaign analysis that never reconciled with finance. It queries what it finds. All of it looks equivalent to the agent.
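The duplicate and conflicting reports described above become visible once certification, ownership, and calculation logic are available as metadata. The sketch below is a minimal illustration; the record layout and the function names `find_conflicts` and `trusted_reports` are assumptions, and real BI metadata would need normalization before calculations could be compared this directly.

```python
from collections import defaultdict


def find_conflicts(reports):
    """Return metrics that appear with more than one calculation."""
    calcs_by_metric = defaultdict(set)
    for report in reports:
        calcs_by_metric[report["metric"]].add(report["calculation"])
    return {m: sorted(c) for m, c in calcs_by_metric.items() if len(c) > 1}


def trusted_reports(reports):
    """Keep only reports that are certified and have an active owner."""
    return [r["name"] for r in reports if r["certified"] and r["owner"]]


# Illustrative records only.
reports = [
    {"name": "CFO Quarterly Revenue", "metric": "revenue",
     "calculation": "gross - adjustments", "certified": True, "owner": "finance"},
    {"name": "Regional Campaign Revenue", "metric": "revenue",
     "calculation": "gross", "certified": False, "owner": None},
    {"name": "Headcount", "metric": "headcount",
     "calculation": "count(employees)", "certified": True, "owner": "hr"},
]
print(find_conflicts(reports))   # {'revenue': ['gross', 'gross - adjustments']}
print(trusted_reports(reports))  # ['CFO Quarterly Revenue', 'Headcount']
```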

The failure mode is predictable: the agent surfaces an answer that does not match the figure the stakeholder already knows to be correct. The stakeholder does not conclude that the agent found an uncertified report. The stakeholder concludes that AI cannot be trusted. That perception is sticky. Recovering stakeholder confidence after it is lost is significantly harder than establishing it in the first place.

The Hidden Cost of Analytics Sprawl examines how estate debt accumulates and why it becomes the primary blocker to AI adoption, not because AI doesn’t work, but because the estate it runs on was never designed with AI access in mind.

The second gap is the one that vendor presentations routinely treat as already solved: the AI understands what the data means.

It does not. Not in the way enterprise analytics requires.

Without a machine-readable analytics context layer to ground it in business meaning, what an AI agent understands is statistical relationships. It can infer that “revenue” and “net revenue” are related. It can observe that they appear near the same dashboards and are referenced together in similar queries. It can produce a coherent response that treats them as related concepts. What it cannot do is apply the business definition the CFO has approved for quarterly reporting. It cannot apply the rule that net revenue for the commercial team excludes contra-revenue items that the finance team includes. It cannot know that a particular metric rolls up to a specific KPI that feeds a specific forecast model, or that the definition of that KPI was revised eighteen months ago and only applies to transactions after a certain date.

That information exists in the organization. It lives in governance documents that were written for humans, in the institutional knowledge of senior analysts who have been there long enough to remember the revision history, and in spreadsheets that function as unofficial business glossaries because no one ever built the formal version. None of it is machine-readable. The agent fills the gaps with statistical inference. The output is coherent. It sounds right. It is wrong in ways that only someone with deep organizational context would catch, and it is wrong inconsistently, which makes the pattern hard to diagnose.

The downstream effect is not a single incorrect answer that gets corrected. It is a pattern of answers that are sometimes right, sometimes wrong in ways that are hard to predict, and that erode trust systematically over time. Stakeholders learn that they cannot rely on AI-generated analytics without manually verifying against their own knowledge. At that point, the agent has added work, not removed it.
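To make "machine-readable" concrete, here is a minimal sketch of how a versioned metric definition could be represented and resolved by date. The field names, business units, formulas, and dates are all assumptions made up for illustration; a real context layer would carry far more structure.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class MetricDefinition:
    metric: str
    business_unit: str
    formula: str          # illustrative calculation rule, not a real policy
    effective_from: date  # first transaction date this version covers
    approved_by: str


def definition_for(metric: str, business_unit: str, as_of: date,
                   definitions: list[MetricDefinition]) -> Optional[MetricDefinition]:
    """Return the most recent definition in force on `as_of`, or None."""
    in_force = [
        d for d in definitions
        if d.metric == metric
        and d.business_unit == business_unit
        and d.effective_from <= as_of
    ]
    return max(in_force, key=lambda d: d.effective_from, default=None)


# Hypothetical revision history for one metric in one business unit.
DEFINITIONS = [
    MetricDefinition("net_revenue", "finance", "gross_revenue - returns",
                     date(2020, 1, 1), "CFO"),
    MetricDefinition("net_revenue", "finance", "gross_revenue - returns - rebates",
                     date(2024, 1, 1), "CFO"),
]
```

With this in place, a question about a 2023 transaction resolves to the earlier formula and a question about a 2024 transaction resolves to the revised one, which is the distinction statistical inference alone cannot recover.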

Automated context generation (deriving the business definitions, KPI relationships, and semantic structure that already exist within the organization’s BI metadata and making them machine-readable) removes this gap. Organizations implementing ZenOptics typically see AI deployment timelines move two to three times faster once that context layer is in place, because the months of manual semantic build work that previously preceded any AI deployment are replaced by automated derivation from existing BI metadata.

The third gap surfaces last. In a demo, it is invisible. In a regulated enterprise, it is the one that makes the deployment unviable.

A demo proves that an agent can answer questions. A production deployment in a regulated industry requires the agent to do something additional: everything it produces and every action it triggers must be auditable. What data informed this response? Which certified metric did this recommendation draw on? What business process rules governed the agent’s action? What was the outcome, and who is accountable for it?

Gartner projects that by 2030, 50 percent of AI agent deployment failures will be due to insufficient governance platform runtime enforcement. The organizations behind those failures are not running ungoverned agents by accident. They are running agents that were designed for demo environments, where governance is not a requirement, and discovered in production that governance cannot be retrofitted.

The problem with retrofitting is not technical complexity alone. It is the nature of decision lineage. If the trace from input to recommendation to action was not captured from the start, it cannot be reconstructed. When an AI-influenced decision needs to be explained to a regulator, an auditor, or a board risk committee, the absence of that trace is not a documentation gap. It is evidence that the decision process was not auditable. In financial services, insurance, healthcare, and other regulated contexts, that is not a recoverable position.
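The point about capturing the trace at the moment of action can be sketched as an append-only log. This is an illustrative assumption about what such a record might contain, based on the audit questions above; the class and field names are hypothetical and not taken from any particular governance platform.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    question: str
    source_metrics: tuple[str, ...]  # certified metrics that informed the response
    process_rule: str                # business process rule governing the action
    recommendation: str
    action: str
    accountable_owner: str
    recorded_at: str


class LineageLog:
    """Append-only log: records are serialized when written, never edited later."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def record(self, **fields) -> LineageRecord:
        rec = LineageRecord(recorded_at=datetime.now(timezone.utc).isoformat(), **fields)
        self._lines.append(json.dumps(asdict(rec), sort_keys=True))
        return rec

    def trace(self, action: str) -> list[dict]:
        """Everything recorded for a given action, in the order it happened."""
        return [e for e in map(json.loads, self._lines) if e["action"] == action]
```

If `record` is never called when the agent acts, `trace` has nothing to return afterwards, which is the retrofitting problem in miniature.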

Governing agent actions from the beginning (mapping every AI-driven step to approved business processes, capturing decision lineage continuously, and monitoring agent behavior within defined boundaries) is not optional overhead. It is the infrastructure that makes the deployment possible in the first place. For a deeper treatment of what enterprise-scale AI governance requires in the analytics context, Governing Autonomous Analytics AI at Enterprise Scale covers the architecture and the regulatory pressures driving it.

The pattern across stalled agentic analytics deployments is consistent: the three gaps are not independent. An ungoverned estate almost always means an absent context layer, because organizations that have not certified their analytics assets have typically not structured the business definitions those assets carry either. A missing context layer almost always means ungoverned execution follows, because agents operating without business context take action on outputs that have not been validated against the business rules that should govern them.

Organizations that address only one gap get further in the pilot. They do not make it to production. The organizations that have completed the transition consistently report the same finding: what changed was not the AI model and not the BI tooling. What changed was the three layers of infrastructure underneath: a certified analytics estate the agent can trust, an analytics context layer that makes its outputs accurate, and a governed execution environment that makes those outputs deployable in the organization’s real operating context.

What that infrastructure looks like, and how Atlas, Nexus, and Maestro close each gap, is mapped out in Agentic Analytics in the Enterprise: From Pilot to Production.

What percentage of agentic AI projects make it to production? Industry-wide data on pilot-to-production conversion rates is still emerging, but Gartner’s projection is direct: more than 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, security concerns, and failure to demonstrate business value. The implication is that a large share of organizations currently running pilots will not complete the transition to production without addressing the structural gaps that pilots conceal.

What is the most common reason agentic analytics fails in enterprise deployments? The most common failure is not a technology failure. It is an infrastructure failure. Specifically: the analytics estate the agent queries is ungoverned, so the agent surfaces inconsistent or uncertified outputs. The AI operates without a machine-readable context layer, so its responses are statistically reasonable but contextually wrong in ways that erode stakeholder trust. And the deployment lacks a governed execution layer, so agent actions cannot be audited or attributed. Any one of these gaps is sufficient to stall a deployment; they tend to appear together.

How do you assess whether your analytics estate is ready for agentic AI? Three indicators determine readiness. First, certification coverage: what percentage of analytics assets in your environment are certified as authoritative, with a designated owner and a known last-review date? If the answer is below 60 to 70 percent, the estate is not agent-ready. Second, context availability: does your organization have machine-readable business definitions for the KPIs and metrics AI agents will query? If that context lives only in documents and institutional knowledge, the context layer is absent. Third, governance architecture: does your execution environment capture decision lineage from AI-driven actions, map those actions to approved business processes, and monitor agent behavior against defined boundaries? If not, the governed execution layer is missing.
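The three indicators can be expressed as a simple check. The sketch below is only one way to frame it: the `Asset` fields and function names are hypothetical, and the default threshold of 0.7 is an assumption that takes the upper end of the 60 to 70 percent range mentioned above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Asset:
    name: str
    certified: bool
    owner: Optional[str]
    last_reviewed: Optional[date]


def certification_coverage(assets: list[Asset]) -> float:
    """Share of assets that are certified, owned, and have a known review date."""
    if not assets:
        return 0.0
    ready = sum(1 for a in assets if a.certified and a.owner and a.last_reviewed)
    return ready / len(assets)


def readiness_gaps(assets: list[Asset], has_machine_readable_context: bool,
                   captures_decision_lineage: bool, threshold: float = 0.7) -> list[str]:
    """Return the names of the indicators that are not yet satisfied."""
    gaps = []
    if certification_coverage(assets) < threshold:
        gaps.append("certification coverage")
    if not has_machine_readable_context:
        gaps.append("context availability")
    if not captures_decision_lineage:
        gaps.append("governance architecture")
    return gaps
```

An empty list from `readiness_gaps` means all three indicators pass under these assumptions; it does not replace a proper assessment of the estate.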

Why does governance matter more for agentic analytics than for traditional BI? In traditional BI, a human reviews every output before acting on it. The human provides an implicit governance layer: catching errors, checking context, and taking accountability for the decision. In agentic analytics, the agent acts continuously, often across multiple steps, without a human reviewing each output. The governance that was implicit in human review has to become explicit in the platform. Without it, the agent operates as a black box, producing outputs that cannot be attributed, audited, or traced back to the certified metrics and approved processes that should govern them.

Can a failing agentic analytics deployment be fixed by switching AI models? Almost never. If the pilot produced inconsistent outputs or failed to gain stakeholder trust, the cause is almost always in the estate and context layers, not in the model. A better model querying an ungoverned estate returns better-articulated wrong answers. Switching models without addressing the underlying infrastructure gaps is the analytics equivalent of upgrading the engine in a car with no roads. The investment in the model is wasted until the foundation is in place.

How does a missing analytics context layer differ from a data quality problem? Data quality problems affect the accuracy of the underlying data records. A missing analytics context layer affects the AI’s ability to interpret what those records mean. An organization can have high-quality, accurate data and still produce wrong AI outputs if the agent does not understand what “revenue” means in the context of this business, how it differs from “net revenue,” or which version of the metric is authoritative for a given reporting context. The two problems have different symptoms and different fixes: data quality requires data engineering; context requires structured business definitions, KPI relationships, and semantic metadata that AI agents can consume.

Enterprise analytics is undergoing its most consequential shift in two decades. AI agents are moving from experimental features inside BI tools into the analytical workflows that organizations use to make real decisions: pricing, supply chain, financial close, risk assessment. Gartner estimates that 40% of enterprise applications will include task-specific AI agents by 2026, up from less than 5% in 2025. That deployment curve is steep, and the pressure on analytics and data leaders to participate in it is real.

The challenge is not running a pilot. Almost any enterprise can stand up an agentic analytics proof of concept. The challenge is getting from a controlled demo to a production deployment that produces outputs the organization actually trusts and acts on. Most pilots don’t make that crossing. Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, security concerns, and failure to demonstrate business value. The failure rate is not driven by technology immaturity. It is driven by three foundational gaps that most organizations enter the pilot stage without having closed.

When an agentic analytics pilot runs in a sandbox environment, it can look compelling. The agent queries data, returns structured outputs, and seems to understand the business question. The problems surface when the scope widens. Move from one business unit to three. Add a second AI tool alongside the first. Ask the agent to answer a question that involves data from multiple BI platforms. At that point, the three gaps that were invisible in a pilot become active blockers.

The first gap is an ungoverned analytics estate. The agent can only be as trustworthy as the analytics it queries. If the estate underneath contains duplicate reports, conflicting KPI definitions, uncertified dashboards, and orphaned content with no active owner, the agent will treat all of it as equally valid. It has no way to distinguish the certified version of revenue from the shadow version built by a regional analyst three years ago. Organizations implementing ZenOptics typically find that 30 to 40 percent of their analytics estate consists of duplicate or conflicting reports. An agent querying that estate will surface inconsistencies at scale, at exactly the moment when the organization is trying to demonstrate that AI can be trusted.

The second gap is the absence of a machine-readable analytics context layer. AI agents need more than access to data. They need to understand what the data means in this organization: what “revenue” means versus “net revenue,” which KPI definition is authoritative for finance versus for the commercial team, how one metric relates to another in a governed hierarchy. Most organizations have this context: it lives in governance documents, in tribal knowledge among senior analysts, in spreadsheets that serve as unofficial business glossaries. None of that is machine-readable. An agent operating without a structured context layer fills the gaps using statistical inference. It produces answers that are probabilistically reasonable but contextually wrong, the enterprise equivalent of a confident hallucination.

The third gap is the lack of a governed execution layer. Even when an agent produces a correct answer, the question for enterprise deployment is whether the action the agent takes as a result is traceable, auditable, and aligned with approved business processes. In regulated industries, the requirement is explicit: every AI-driven decision must carry an auditable trail of what data it was based on, what recommendation it generated, and what action it drove. Without a governed execution layer, agents act without guardrails. Gartner projects that by 2030, 50 percent of AI agent deployment failures will be due to insufficient governance platform runtime enforcement. Organizations that wait to build governance until after agents are deployed will spend significantly more time and money rebuilding than they would have spent building governance in from the start.

Agentic analytics runs on top of the existing analytics estate. The quality of what the agent returns is a direct function of the quality of what it has access to. An ungoverned estate, one where ownership is unclear, certifications are absent or decayed, and duplicate reports accumulate across tools, is not a foundation an agent can build trusted outputs on.

Making the estate agent-ready requires four things. Complete inventory across every BI tool in the environment, including secondary platforms that IT does not centrally manage. Certification of analytics assets that establishes which version of each metric is authoritative. Ownership assignment that gives every certified asset an accountable human. And continuous tracking of the estate as it changes, so the governance layer does not decay the moment the initial pass is complete.

The common failure mode is treating this as a one-time project. A team inventories the estate, certifies a set of assets, and marks the stage complete. Agents are then deployed against that certified subset while the ungoverned remainder of the estate continues to accumulate. The agent’s outputs are accurate when they touch the governed portion and unpredictable when they do not. The resulting inconsistency is what drives stakeholders to distrust AI-generated analytics, not because AI doesn’t work, but because the estate underneath it wasn’t ready.

A certified analytics estate is the starting point.

Certification confirms that an asset is authoritative. It does not tell an AI agent what the asset means. For an agent to produce answers that are contextually correct (not just statistically reasonable), it needs machine-readable business context: structured definitions of what each metric means in this organization, how it relates to other metrics, which KPIs it feeds into, who owns its definition, and how it differs across business units where similar terms are used with different meanings.

Many enterprise semantic initiatives struggle to scale and remain current over time. Manually constructing a knowledge base that captures the full semantic structure of an enterprise’s analytics has historically required significant custom build work, often tied to a specific AI tool. When the tool changes, the build starts over. When the business definitions evolve, the knowledge base decays. Organizations end up with AI context that is either too narrow to be useful or too stale to be trusted.

The alternative is automated context generation: deriving the definitions, relationships, and semantic structure that already exist within the organization’s BI metadata, and making them machine-readable without requiring a manual rebuild. The context layer built this way is tied to the estate rather than to any specific AI tool, so it persists as tools change and grows as the estate evolves. The manual semantic build work that previously took months before an AI deployment could begin is replaced by automated derivation from existing metadata. Organizations implementing ZenOptics typically see AI deployment timelines move two to three times faster once the automated context layer is in place.

The third gap is the one that separates organizations that can demo agentic analytics from organizations that can deploy it in regulated enterprise environments.

An agent that can answer questions correctly is useful. An agent whose every action is traceable back to a certified source, whose recommendations are aligned with approved business processes, and whose decisions carry a full audit trail from input to output is deployable in a regulated enterprise. That distinction (between an agent that works and an agent that can be governed) is what most agentic analytics architectures currently lack.

Decision traceability is the mechanism. Every AI-driven action needs to be traceable: which certified KPI triggered the analysis, what business context grounded the recommendation, what process boundaries the agent operated within, and what outcome it drove. Without that trace, the agent operates as a black box. Stakeholders will accept a black box in a demo. They will not accept it when the decision being made affects revenue recognition, regulatory reporting, or supply chain commitments.

Building governed execution requires an architecture that sits between the AI agent and the business process: a layer that enforces approved process boundaries, maps agent actions to trusted analytics sources, and captures the decision lineage needed for audit and compliance. This is not a feature that gets added after deployment. It is the infrastructure that makes deployment possible in the first place.
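A minimal sketch of such an in-between layer follows, assuming a hypothetical policy table of approved actions per process and a hypothetical set of certified metrics. Every name here is invented for illustration, and a production system would load this policy from governed configuration rather than hard-coding it.

```python
from typing import Callable


class BoundaryViolation(Exception):
    """Raised when an agent action falls outside its approved boundary."""


# Hypothetical policy: which actions each approved process allows.
APPROVED_ACTIONS = {
    "pricing_review": {"draft_recommendation", "notify_owner"},
}
# Hypothetical set of certified metrics an action may be based on.
CERTIFIED_METRICS = {"net_revenue", "gross_margin"}


def governed_execute(process: str, action: str, source_metric: str,
                     handler: Callable[[], str], audit_log: list[dict]) -> str:
    """Run `handler` only if the action is in bounds; log the outcome either way."""
    entry = {"process": process, "action": action, "source_metric": source_metric}
    if action not in APPROVED_ACTIONS.get(process, set()):
        audit_log.append({**entry, "outcome": "blocked: outside process boundary"})
        raise BoundaryViolation(f"{action!r} is not approved for {process!r}")
    if source_metric not in CERTIFIED_METRICS:
        audit_log.append({**entry, "outcome": "blocked: uncertified source"})
        raise BoundaryViolation(f"{source_metric!r} is not a certified metric")
    result = handler()
    audit_log.append({**entry, "outcome": result})
    return result
```

Blocked attempts are logged as well as successful ones, so the audit trail shows what the agent tried to do, not only what it was allowed to do.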

Production-ready agentic analytics is not a single technology. It is three layers working together.

The first layer is a continuously maintained analytics system of record. Every asset in the BI environment is inventoried, certified, and assigned to an owner. The governance layer is not a one-time exercise but an ongoing operational practice, automated so that as the estate changes the governance state changes with it.

The second layer is a machine-readable analytics context layer. The semantic structure, business definitions, KPI relationships, and ownership hierarchy are derived automatically from the existing BI metadata and structured so that AI agents can consume them reliably. The context layer is maintained as the estate evolves, not rebuilt from scratch each time a new AI use case is introduced.

The third layer is a governed execution environment. AI agents operate within defined process boundaries. Their actions are aligned to approved workflows. Every decision they drive carries a traceable lineage from the certified metric that informed it, through the recommendation it generated, to the outcome it produced. That lineage is the audit trail that makes the agent’s outputs acceptable to compliance, risk, and executive stakeholders.

Organizations that have closed all three gaps report that the shift from pilot to production is less a technology problem than an infrastructure problem. The AI tools are largely ready. The analytics estate, the context layer, and the governed execution environment are where the work is.

Atlas, ZenOptics’s Analytics System of Record, addresses the first gap. Atlas continuously inventories analytics assets across Power BI, Tableau, Looker, and other BI tools, tracking ownership, certification status, and usage without requiring those tools to be replaced. The governance practice is embedded in the platform: the certified estate reflects current reality rather than a point-in-time snapshot, and it stays current as the estate changes.

Nexus, ZenOptics’s AI Context Layer for Analytics, addresses the second gap. Rather than requiring a manual build of business context for each AI deployment, Nexus automatically derives the definitions, KPI relationships, and semantic structure the existing certified estate contains, and makes it machine-readable. The context layer is maintained as the estate evolves, available to any AI tool in the organization’s stack without being rebuilt for each new use case.

Maestro, ZenOptics’s Execution and Agent Control Layer, addresses the third gap. Maestro maps every AI-driven decision to the trusted analytics that informed it, enforces process boundaries, monitors agent behavior, and captures full decision provenance. Every action an agent takes under Maestro is traceable, auditable, and tied to an approved business process: the governance requirement for enterprise AI deployment in regulated industries.

Together, the three layers produce the production-ready foundation agentic analytics requires: a trusted estate (Know), an AI-ready context layer (Understand), and a governed execution environment (Act). For organizations whose analytics AI initiatives have stalled at the pilot stage, the question is typically not which AI agent to choose. It is which of these three layers is missing.

For organizations working through the analytics estate modernization that the first layer requires, analytics modernization in the AI era covers the foundational sequence. For the architecture that makes the full AI-readiness picture clear, the AI-ready analytics enterprise blueprint maps the decision intelligence stack end-to-end.

What is agentic analytics? Agentic analytics is the use of autonomous AI agents to perform multi-step analytical tasks (querying data, synthesizing outputs, making recommendations, and in some cases triggering actions) without requiring a human to drive each step manually. Unlike single-turn AI queries, agentic analytics workflows operate continuously, adapt to new inputs, and can execute across multiple systems. The enterprise challenge is not deploying an agent that can run a task in a demo environment. It is deploying one that produces trusted, governed outputs at production scale.

Why do agentic analytics pilots fail to reach production? Most agentic analytics pilots fail to scale because they are run on top of an unprepared foundation. The three most common gaps are an ungoverned analytics estate where certified and uncertified assets are indistinguishable to an AI agent; the absence of a machine-readable context layer that tells the agent what metrics actually mean in the organization; and the lack of a governed execution layer that makes agent actions traceable and auditable. Each gap can be hidden in a controlled pilot and becomes a critical blocker at production scale.

What is the difference between agentic AI and agentic analytics? Agentic AI is the broader category of autonomous AI systems capable of multi-step task execution across domains. Agentic analytics refers specifically to AI agents operating within the enterprise analytics and business intelligence environment: querying BI platforms, interpreting KPIs, synthesizing reports, and driving analytical decisions. The distinction matters because the governance requirements for analytics decisions are distinct from those for general AI tasks: analytics outputs are tied to certified business metrics, regulated reporting, and executive decisions, which requires a specific layer of context and traceability that general-purpose agentic AI frameworks do not provide.

What does an enterprise need before deploying agentic analytics? Three things are required. First, a governed analytics estate: a complete inventory of all BI assets, with certification of authoritative sources and clear ownership assignment, maintained continuously rather than as a point-in-time exercise. Second, a machine-readable analytics context layer: structured business definitions, KPI relationships, and semantic metadata that AI agents can consume without requiring manual build work for each deployment. Third, a governed execution layer: an architecture that enforces approved process boundaries, maps agent actions to trusted analytics, and captures the full decision lineage required for audit and compliance. Enterprises that deploy agentic analytics without all three typically see inconsistent outputs, stakeholder distrust, and eventual project cancellation.

How does agentic analytics differ from traditional BI? Traditional BI is query-on-demand: a user navigates to a dashboard, runs a report, and interprets the output. Agentic analytics is autonomous and continuous: an AI agent monitors data, identifies signals, synthesizes outputs across sources, and can trigger next steps without waiting for a human to initiate the query. The shift from BI to agentic analytics is not just a technology change. It is an operational change. The governance, certification, and context requirements that were good practice in traditional BI become non-negotiable in agentic analytics, because the agent will act on whatever it finds, at scale, without a human reviewing each step.

How long does it take to make an analytics estate production-ready for agentic AI? Timeline depends on the size and complexity of the existing BI estate, the number of tools in scope, and how much automation is applied to inventory and governance. Organizations that approach readiness as a continuous practice rather than a bounded project make faster and more durable progress. Establishing the inventory and certification layers first, with automated tooling to maintain them, compresses the timeline significantly compared to manual governance approaches. Organizations implementing ZenOptics across these layers typically see AI deployment timelines move two to three times faster than manual semantic build approaches, because the context layer is derived automatically from what already exists rather than built from scratch.

By the time an Analytics Director or VP Data is ready to run an enterprise analytics modernization initiative, the conceptual case has already been made. The budget is approved or in negotiation. The four-step framework of inventory, certify, contextualize, and activate has been presented to leadership. The question is no longer why analytics modernization matters. The question is how to execute it, in what order, without letting the initiative become another multi-year governance project that loses momentum before it delivers.

Over the past two years, as AI pressure has accelerated timelines for getting the analytics estate AI-ready, a pattern has emerged in how enterprise modernization efforts play out. The initiative launches with strong intent, produces visible early progress, and then decelerates. Stakeholder attention moves elsewhere. The scope narrows. The completion criteria never quite arrive.

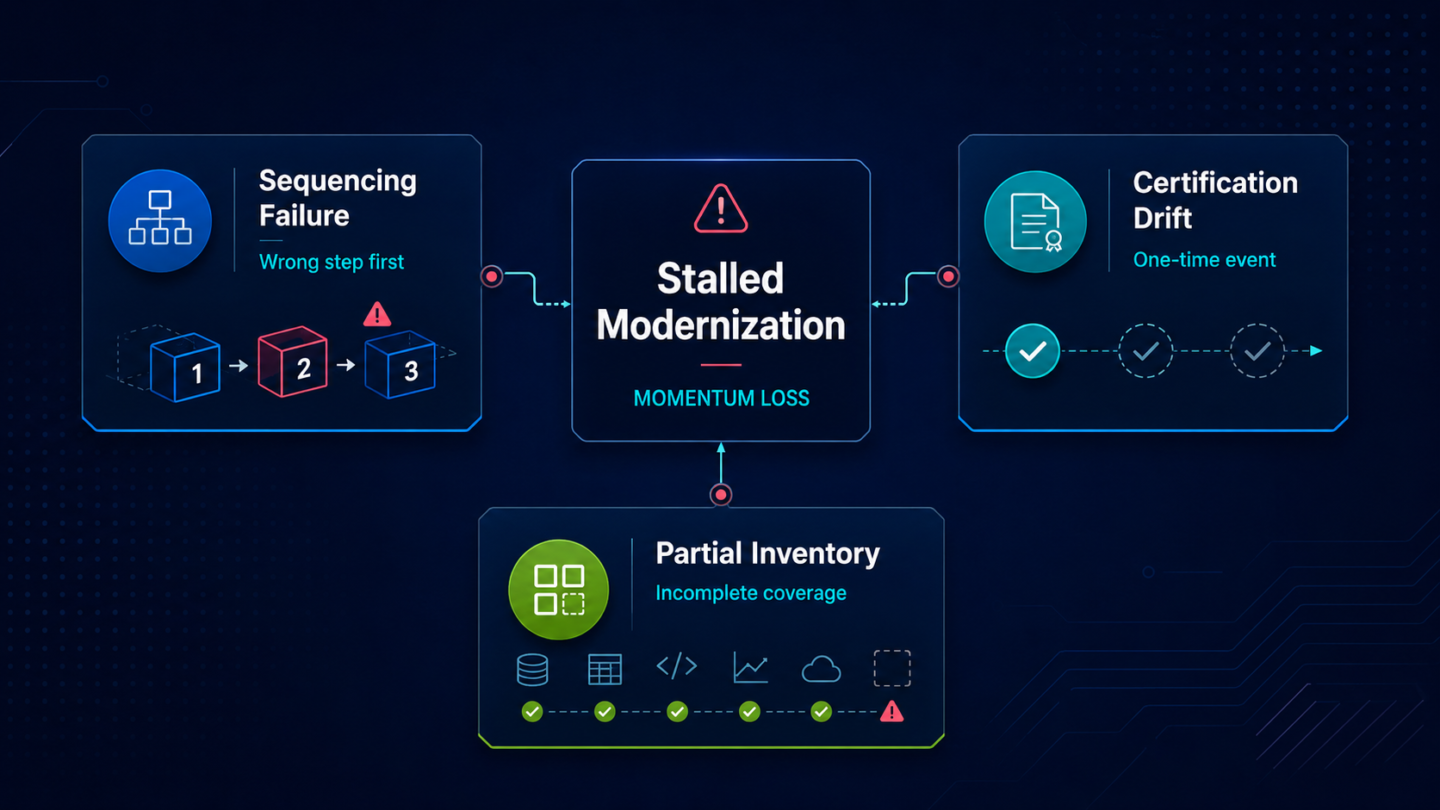

Three failure patterns account for most stalled modernization programs.

The first is sequencing failure: starting with the step that feels most urgent rather than the one that makes the next step possible. Certification launched before inventory is complete produces a certified subset while the rest of the estate continues to undermine trust. Contextualization attempted before certification produces machine-readable metadata attached to assets nobody has validated as authoritative.

The second is treating certification as an event rather than a practice. A team completes a certification pass, marks a set of assets as approved, and moves on. Six months later, those assets have drifted. New versions have been published without going through review. The certification label still exists but no longer means anything.

The third is underestimating the inventory scope. Most BI teams know their primary tools. Complete coverage means every tool: the secondary platforms running departmental reporting, the legacy systems that were never fully deprecated, the content that migrated into the new platform and never got cleaned up. Partial inventory produces partial governance.

The four-step sequence holds for a specific reason: each stage produces a dependency that the next stage requires, and shortcuts collapse the value of what follows.

Inventory is the precondition for certification. You cannot make principled decisions about which assets are authoritative if you do not have a complete picture of what exists. Certifying without full inventory means certifying a subset while the ungoverned portion of the estate continues to produce conflicting outputs.

Certification is the precondition for contextualization. AI tools need business context attached to assets that are known to be trustworthy. Context attached to uncertified assets is noise, not signal. Contextualization built on a partial or drift-prone certification layer will produce the same unreliable AI outputs that motivated the modernization initiative in the first place.

Contextualization is the precondition for activation. AI copilots and agents can only ground their outputs reliably if the business context they are grounding in is structured, current, and attached to certified assets. Activation without that foundation produces AI answers that cannot be traced, verified, or trusted.

The sequence is not a preference. It is the dependency chain that determines whether activation delivers anything the organization can act on.
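The dependency chain can be written down as a gate: a stage may start only when every earlier stage is complete. The sketch below is a deliberately small model of that rule, and the function names are assumptions for illustration.

```python
from typing import Optional

# The four stages in the order the dependency chain requires.
STAGES = ["inventory", "certify", "contextualize", "activate"]


def can_start(stage: str, completed: set[str]) -> bool:
    """A stage may begin only when all stages before it are complete."""
    index = STAGES.index(stage)
    return all(earlier in completed for earlier in STAGES[:index])


def next_stage(completed: set[str]) -> Optional[str]:
    """First stage in sequence that is not yet complete, or None when done."""
    for stage in STAGES:
        if stage not in completed:
            return stage
    return None
```

Under this rule, `can_start("contextualize", {"inventory"})` is false: context attached before certification is exactly the shortcut the sequence is meant to prevent.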

An inventory that counts only the tools an organization actively manages is incomplete before it is finished. Enterprise BI environments accumulate content from multiple sources: migrations that moved some assets forward and left others behind, departmental reporting built in tools that IT does not centrally govern, dashboards created for projects that ended but were never retired.

A complete inventory requires four attributes for every asset: ownership (who is responsible for this report), usage data (is anyone actually using it, and how often), certification status (has this asset been validated as authoritative), and relationships (what other assets depend on this one or share its definitions). Without those attributes, the inventory is a list. A list can support awareness but not governance decisions.
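One way to picture the difference between a list and an inventory is a record type in which the four attributes are explicit and their absence is detectable. The field names and the 90-day usage window below are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InventoryEntry:
    asset_id: str
    tool: str
    owner: Optional[str] = None                  # who is responsible
    views_last_90_days: Optional[int] = None     # usage data; None = not measured
    certified: Optional[bool] = None             # None = never assessed
    related_assets: Optional[list[str]] = None   # None = relationships not mapped


REQUIRED = ("owner", "views_last_90_days", "certified", "related_assets")


def missing_attributes(entry: InventoryEntry) -> list[str]:
    """Attributes that are unknown, as opposed to known-but-empty."""
    return [name for name in REQUIRED if getattr(entry, name) is None]


def supports_governance(entry: InventoryEntry) -> bool:
    """True only when all four attributes are known for the asset."""
    return not missing_attributes(entry)
```

The sketch distinguishes "unknown" from "known to be zero or false": a report with zero views and no dependents is fully inventoried, while a report nobody has measured is not.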

Manual inventory maintenance fails at enterprise scale. The estate changes continuously as teams build, modify, and abandon content across tools. An inventory built as a point-in-time exercise is outdated by the time it is presented to leadership. Sustained modernization requires an automated inventory layer that tracks changes as they happen.

The most common certification failure is treating certification as a labeling exercise. A governance team reviews the inventory, marks a set of assets as certified, and considers the stage complete. The problem is that certification is not a state. It is a practice. A label applied once decays as the estate changes around it.

Durable certification requires three operational components. The first is a defined validation process: who reviews an asset before it is certified, what criteria they apply, and what documentation is required. The second is ownership that is institutional rather than individual: not a name attached to an asset, but a role with accountability for keeping that asset current and valid. The third is a refresh mechanism: a defined cadence or trigger that requires certified assets to be re-reviewed when the business definitions they represent change.
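The refresh mechanism, the third component, lends itself to a short sketch. It combines a trigger (the business definition changed after certification) with a cadence (too long since the last review). The 180-day default is an arbitrary assumption, and the function name is hypothetical.

```python
from datetime import date, timedelta
from typing import Optional


def needs_recertification(certified_on: date,
                          definition_changed_on: Optional[date],
                          today: date,
                          cadence_days: int = 180) -> bool:
    """Decide whether a certified asset is due for re-review."""
    # Trigger: the definition the asset represents changed after certification.
    if definition_changed_on is not None and definition_changed_on > certified_on:
        return True
    # Cadence: the certification is older than the agreed review interval.
    return today - certified_on > timedelta(days=cadence_days)
```

Checking the trigger first means a recently certified asset is still flagged if its underlying definition has moved, which is the drift a fixed cadence alone would miss.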

Organizations that treat these three components as ongoing governance infrastructure rather than a one-time project are the ones whose certified estate remains meaningful twelve months after the initial certification pass. The ones that treat certification as a campaign find that the label has degraded by the time they try to build contextualization on top of it.

A certified analytics estate is ready for human governance. It is not automatically ready for AI.

AI tools require business context that is explicit, structured, and attached to the certified assets: what this metric means in this organization, how it differs from superficially similar metrics in other business units, what KPIs it feeds into, and who is accountable for its definition. Most organizations have this context. It exists in documents, in tribal knowledge among senior analysts, and in governance guides that are rarely updated. The problem is that none of that is machine-readable.

Making business context machine-readable has typically required significant manual build work tied to each specific AI tool deployment. A team integrates an AI copilot, then spends weeks documenting the context that tool needs to ground its outputs in the organization’s actual definitions. That work does not transfer when the tool changes or when a new use case is added.

Contextualization as a modernization practice means building and maintaining that business context systematically, attached to the certified estate rather than to any specific AI deployment. The context persists and grows as the estate evolves, rather than being rebuilt from scratch each time a new AI workflow is introduced.
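To make "attached to the certified estate rather than to any specific AI deployment" concrete, here is one possible shape for a machine-readable context record. The field names and the JSON export are hypothetical; they are not a published schema, only a sketch of context keyed by asset rather than by tool.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class MetricContext:
    """Hypothetical business context for one certified asset."""
    asset_id: str
    name: str
    definition: str          # what the metric means in this organization
    owner_role: str          # who is accountable for the definition
    feeds_kpis: list[str] = field(default_factory=list)               # KPIs it feeds into
    not_to_be_confused_with: list[str] = field(default_factory=list)  # similar-looking metrics


def export_context(records):
    """Serialize context keyed by asset ID so any AI workflow can load the same file."""
    return json.dumps({r.asset_id: asdict(r) for r in records}, indent=2, sort_keys=True)
```

Because the export is keyed by asset ID and names no AI tool, a second copilot or a new use case would read the same records instead of prompting a fresh documentation effort.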

Analytics modernization produces infrastructure rather than visible outputs. That makes progress hard to communicate to leadership and hard to defend when priorities shift. Instrumenting each stage is what keeps the initiative accountable and visible.

At the inventory stage: percentage of BI tools with full asset coverage, total asset count by tool and status, percentage of assets with named ownership, and staleness rate measured as assets not accessed or validated within a defined period.

At the certification stage: percentage of active assets carrying a current certification, percentage with assigned ownership, time-in-review for assets pending certification decisions, and the conflict resolution backlog (how many assets have conflicting definitions that certification has not yet resolved).

At the contextualization stage: percentage of certified assets with structured business definitions, percentage with documented KPI relationships and ownership metadata, and coverage of the certified estate by the context layer.

At the activation stage: AI output traceability rate (what percentage of AI-generated answers can be traced back to a certified, contextualized asset), user confidence in AI-generated outputs, and reduction in escalations from AI tools to the BI team for answer verification.
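Several of these stage metrics reduce to simple ratios once the inventory data exists. The sketch below computes three of them; the dictionary keys (`owner`, `days_since_access`, `source_asset_id`) and the 90-day staleness threshold are assumptions chosen for illustration.

```python
def rate(numerator, denominator):
    """Percentage rounded to one decimal; defined as 0.0 for an empty population."""
    return 0.0 if denominator == 0 else round(100.0 * numerator / denominator, 1)


def inventory_metrics(assets, stale_after_days=90):
    """Ownership rate and staleness rate for the inventory stage."""
    total = len(assets)
    owned = sum(1 for a in assets if a.get("owner"))
    stale = sum(1 for a in assets if a["days_since_access"] > stale_after_days)
    return {"ownership_pct": rate(owned, total), "staleness_pct": rate(stale, total)}


def traceability_pct(answers, certified_context_ids):
    """Share of AI answers whose cited source is a certified, contextualized asset."""
    traced = sum(1 for ans in answers if ans.get("source_asset_id") in certified_context_ids)
    return rate(traced, len(answers))
```

An answer that cites no source, or cites an asset outside the certified set, counts against traceability. That is the conservative reading of the metric, and a team could reasonably choose a looser one.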

The work described above is sustainable only if the infrastructure supporting it is automated. Manual inventory maintenance becomes a full-time burden at enterprise scale. Manual certification tracking drifts. Manual context builds are rebuilt from scratch for each new AI deployment. The governance practice collapses under its own weight before it reaches activation.

Atlas, ZenOptics’s Analytics System of Record, handles inventory and certification continuously across Power BI, Tableau, Looker, and other BI tools in the existing environment without requiring those tools to be replaced. Usage data and ownership are tracked as the estate changes, so the inventory reflects current reality rather than a snapshot from six months ago. Certification is managed at the estate level: the governance practice is embedded in the platform rather than maintained through a manual process.

Nexus automates contextualization. Rather than requiring a manual build of business context for each AI deployment, Nexus derives the definitions, KPI relationships, and semantic structure the certified estate contains and makes them machine-readable automatically. The context layer is maintained as the estate evolves rather than rebuilt from scratch each time it is needed.

Organizations implementing ZenOptics typically find that 30 to 40 percent of their analytics estate consists of duplicate or conflicting reports, and they see 20 to 40 percent faster analytics discovery once inventory and governance are in place. Those outcomes arrive before AI is introduced. The AI payoff comes after, because the estate is ready to support it.

For the full framework, including the four-step sequence and its rationale in the context of AI readiness, analytics modernization in the AI era covers the conceptual foundation.

What is enterprise analytics modernization?

Enterprise analytics modernization is the process of making an organization’s entire BI estate trusted, governed, and machine-readable across all tools and business domains. It involves inventorying every analytics asset, certifying which assets are authoritative, structuring business context so AI can use those assets reliably, and activating AI workflows on that governed foundation. It is distinct from BI tool migration: modernization addresses the governance and structure of the estate, not the replacement of the tools it runs on.

What is a BI modernization playbook?

A BI modernization playbook is a sequenced execution guide for taking an enterprise analytics estate from ungoverned to AI-ready. It defines what each modernization stage requires, the order in which stages must be completed, the failure modes to avoid at each step, and how to measure progress in terms leadership can evaluate. A playbook differs from a framework: a framework defines the stages; a playbook defines how to run them.

How long does enterprise analytics modernization take?

Timeline depends on the size and complexity of the existing BI estate, the number of tools in scope, and how much automation is applied to inventory and governance. Organizations that approach modernization as a continuous practice rather than a bounded project make more durable progress: they establish the inventory and certification layers first, show measurable outcomes at each stage, and extend coverage over time rather than attempting full coverage before claiming completion.

What does an analytics governance framework include?

An analytics governance framework defines the policies, processes, and ownership structures that keep an analytics estate trustworthy over time. At minimum it includes: an inventory practice that maintains current visibility into all analytics assets, a certification process that establishes and enforces which assets are authoritative, ownership assignment that makes individual teams accountable for specific assets, and a refresh cadence that keeps certification current as the business evolves. Governance frameworks that lack any of these components tend to produce certifications that decay and inventories that go stale.

What is trusted analytics?

Trusted analytics describes an analytics estate in which the assets available to users and AI tools have been validated, certified, and assigned to named owners, so that anyone consuming an output knows it comes from a source the organization has designated as authoritative. Trusted analytics is the output of a working certification practice, not a technology feature. It requires ongoing governance to remain meaningful as the estate changes.

How do you measure analytics modernization progress?

Meaningful measurement requires instrumentation at each stage. Inventory progress is measured by tool coverage, asset count, ownership rate, and staleness. Certification progress is measured by the percentage of assets certified, ownership coverage, and conflict resolution backlog. Contextualization progress is measured by the percentage of certified assets with structured business definitions and relationship metadata. Activation progress is measured by AI output traceability and reduction in AI-related escalations to the BI team. Without stage-specific metrics, modernization initiatives lose visibility and stakeholder confidence before the governance infrastructure is complete.

Most BI teams treat analytics sprawl as a deferred maintenance problem. The reports keep accumulating, the duplicates keep multiplying, and the response is usually the same: a cleanup project gets added to the backlog, pushed past each planning cycle, and never quite cleared. The assumption is that sprawl is cosmetic: untidy, low-priority, a problem for next quarter. That assumption is increasingly expensive. In environments where AI is being asked to operate on top of the BI estate, the cost of sprawl is not deferred. It arrives the moment an AI tool tries to ground its outputs in an uncharted, ungoverned analytics environment.

Over the past two years, enterprise AI investment has accelerated and new reporting demands have multiplied. The BI content created in support of pilots, tool rollouts, and cross-functional requests has landed in estates that were already carrying years of accumulated technical debt. The pattern inside those estates is consistent.

A report gets built for a quarterly business review. Six months later, someone builds a nearly identical version for a different audience. A project ends; its dashboards stay live. A migration happens; the old tool retains content nobody remembered to retire. A team clones a report to make minor customizations and forgets to link it back to the original.

Each of those decisions made sense individually. None of them included a retirement plan. Over time, the result is an estate filled with analytics assets that nobody owns, nobody actively uses, and nobody can confidently retire without worrying about breaking something a downstream user depends on.

Analytics sprawl is the uncontrolled proliferation of duplicate, unused, or conflicting analytics assets across an organization’s BI environment. It is not a sign of negligence. It is the predictable output of BI tools that make creation easy and lifecycle management nearly impossible at scale.

The costs are distributed across three areas, and most of them do not appear on a single budget line.

BI tool licensing is the most visible. Many enterprise BI platforms price on consumption metrics that include content volume, user activity, and storage. An estate padded with orphaned reports and duplicate dashboards inflates those metrics without delivering proportional value. Organizations paying for capacity they are not using are subsidizing the growth of their own sprawl.

Labor cost is less visible and typically larger. When analysts need a report and cannot find it, they build one. Organizations implementing ZenOptics typically find that 30 to 40 percent of their analytics estate consists of duplicate or conflicting reports. That means a significant share of BI production effort goes into recreating work that already exists in another corner of the estate. Discovery time compounds the problem: when searching for an existing asset takes longer than building a new one, the sprawl grows faster.

Trust erosion is the hardest to quantify and the most consequential. When an analyst finds three versions of the pipeline report and cannot determine which is authoritative, the result is decision latency, escalations to BI teams, and a gradual retreat from self-service. The analytics investment produces outputs that stakeholders hedge rather than act on.

Orphaned analytics assets are reports and dashboards with no active owner, no verified usage, and no connection to current business processes. They are common in every multi-tool BI environment, and they accumulate for structural reasons.

BI assets are created on demand, often without an assigned owner or a documented purpose that survives the project they supported. When team structures change, when stakeholders move on, and when business priorities shift, the reports they required remain. Lifecycle management is rarely built into the BI governance process because it is treated as a separate concern from content creation.

The problem with orphaned reports is not just that they take up space. It is that they are indistinguishable from authoritative assets to anyone who does not already know the difference. When an analyst searches for a metric, the orphaned version surfaces alongside the current one. When governance teams try to certify the estate, orphaned assets create noise that slows the process and increases the risk of certifying the wrong version.

The reason sprawl persists is structural. Rationalization requires knowing what exists. Most BI leaders have a reasonable picture of which tools their organization runs. Far fewer have a complete, current picture of every report, dashboard, and KPI definition those tools contain across all platforms simultaneously, with visibility into ownership, usage, and duplication.

Without that inventory, cleanup efforts are limited to what individual teams happen to know about their own corners of the estate. Consolidation conversations stall because nobody can say with confidence what is safe to retire. Migration projects inherit sprawl from the platforms they replace. Each tool refresh moves content forward without clearing the backlog.

The inventory is not a one-time project. The estate changes continuously as teams create, modify, and abandon content. Maintaining a current, cross-tool picture of the analytics environment requires automation rather than periodic manual audits.

Rationalization is the process of moving from an uncharted analytics estate to one that is inventoried, governed, and certified. It requires four things: a complete picture of what exists, usage data to identify what is actively being used, ownership assignment for everything that remains, and a certification process that distinguishes authoritative assets from duplicates and orphans.
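The four requirements can be read as inputs to a triage pass over the inventory. The sketch below is deliberately simplified and rests on stated assumptions: assets are plain dictionaries with hypothetical `id`, `definition`, `views`, and `owner` keys, and two assets count as duplicates only when their definition text matches after trimming and lowercasing. Real duplicate detection would need something much richer than string equality.

```python
def triage(assets, min_views=1):
    """Assign each asset one label: 'certify', 'assign_owner', 'duplicate', or 'orphan'."""
    labels = {}
    seen_definitions = {}
    # Visit the most-used assets first so the busiest copy of a definition is kept.
    for a in sorted(assets, key=lambda a: a["views"], reverse=True):
        key = a["definition"].strip().lower()
        if a["views"] < min_views and not a["owner"]:
            labels[a["id"]] = "orphan"        # no usage and no owner
        elif key in seen_definitions:
            labels[a["id"]] = "duplicate"     # a busier asset already covers this definition
        else:
            seen_definitions[key] = a["id"]
            labels[a["id"]] = "certify" if a["owner"] else "assign_owner"
    return labels
```

Run against a small sample, a heavily used and owned pipeline report is labeled `certify`, its lightly used clone is labeled `duplicate`, an unowned report with no views is labeled `orphan`, and an unowned but active report is labeled `assign_owner`. The labels are candidates for human review, not retirement decisions.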

Atlas, ZenOptics’s Analytics System of Record, provides this infrastructure across the existing BI environment. It surfaces every analytics asset across tools including Power BI, Tableau, and Looker without requiring those tools to be replaced. Usage data and ownership are tracked continuously, so the inventory stays current rather than decaying between review cycles. Certification is managed at the estate level, not tool by tool.