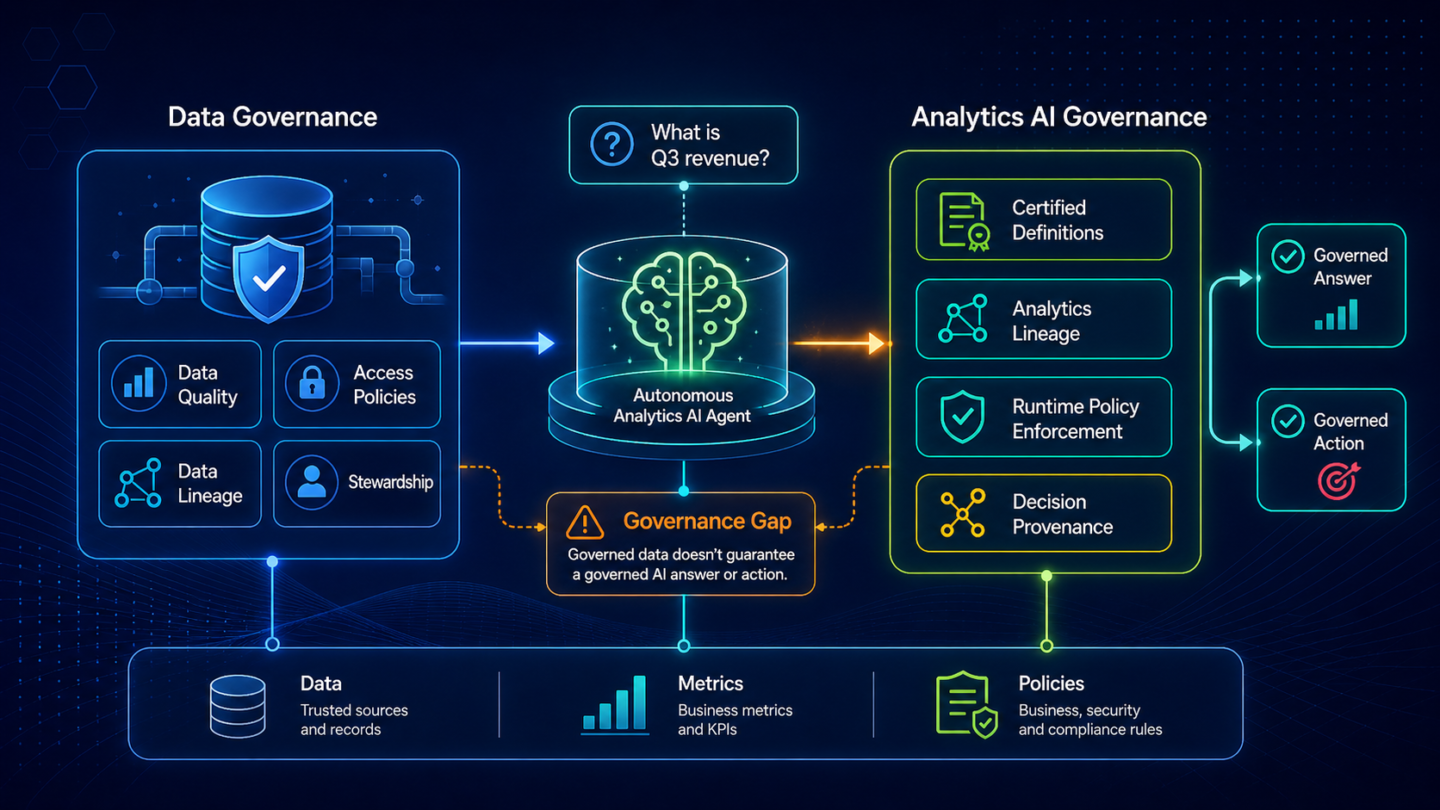

Autonomous analytics AI is moving into enterprise workflows faster than the governance practices most teams rely on. Data governance governs the data; it does not govern what AI says, how it reasons, or how decisions are made on top of that data. That distinction is becoming critical as AI moves from analysis to action.

The Autonomous Analytics AI Governance Gap Is Real

The autonomous analytics AI governance gap is emerging as a new category of risk for CIOs and Chief Data Officers. Most enterprises have built strong data governance over the past decade, but those practices were designed for a world where humans interpreted data and made decisions. AI agents are now generating answers and triggering actions, and governance has not evolved to match that shift.

The numbers behind the gap are concrete. Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025. A second Gartner projection names the likely cause of failure: by 2030, 50% of AI agent deployment failures will be due to insufficient AI governance platform runtime enforcement for capabilities and multisystem interoperability.

Two trends are colliding. Autonomous analytics AI agents are scaling rapidly across enterprise applications, while the governance required to control their behavior at runtime is still missing. Most organizations fall back on data governance because it is the closest existing discipline, but it does not extend to governing AI-driven decisions.

Why Data Governance Does Not Solve Analytics AI Governance

Most enterprises have mature data governance: data quality programs, master data management, data lineage, data stewardship, classification and access policies. None of this is wrong. All of it is necessary. None of it answers the questions analytics AI governance has to answer.

Data governance defines what the data is and how it should be managed. Analytics AI governance defines what the AI can say, which definitions it must use, and how its decisions are controlled and traced. One governs data. The other governs decisions.

When an autonomous analytics AI agent answers a CFO’s question about Q3 revenue and triggers a downstream action, the data governance stack ensures the data is accurate and traceable. It does not ensure the AI used the certified business definition, nor does it ensure that the decision can be traced back through that definition and governed at the moment of execution.

The gap between "the data is governed" and "the answer is governed" is the gap most enterprises are about to discover the hard way.

Three Failure Modes Data Governance Does Not Catch

Three failure modes consistently appear when autonomous analytics AI is deployed without an analytics-specific governance layer. These are not failures of data governance. They are failures of governing how AI uses and interprets the business.

The first is definition drift. The certified business definition for revenue, churn, or a customer-segment boundary is documented in data governance and stewarded by a domain owner. The AI may use a different one. Data governance has the definition; it does not enforce that the AI grounds in it. The CFO finds out when two AI-generated reports disagree on the same number.

The second is lineage opacity at the decision layer. Data lineage tracks how data flowed from source systems through the pipeline. It does not track how the AI assembled an answer from definitions, metrics, and runtime policies. When an answer is challenged, data governance can show what data the AI saw. It cannot show how the AI reasoned to a specific answer or which certified definitions it grounded on.

The third is the runtime policy gap. Data governance policies are static: ownership, classification, retention, access rules. They do not enforce business rules at the moment an analytics AI agent acts. When the agent acts, data governance covers the data underneath, but the analytics AI behavior on top of it remains ungoverned in real time.

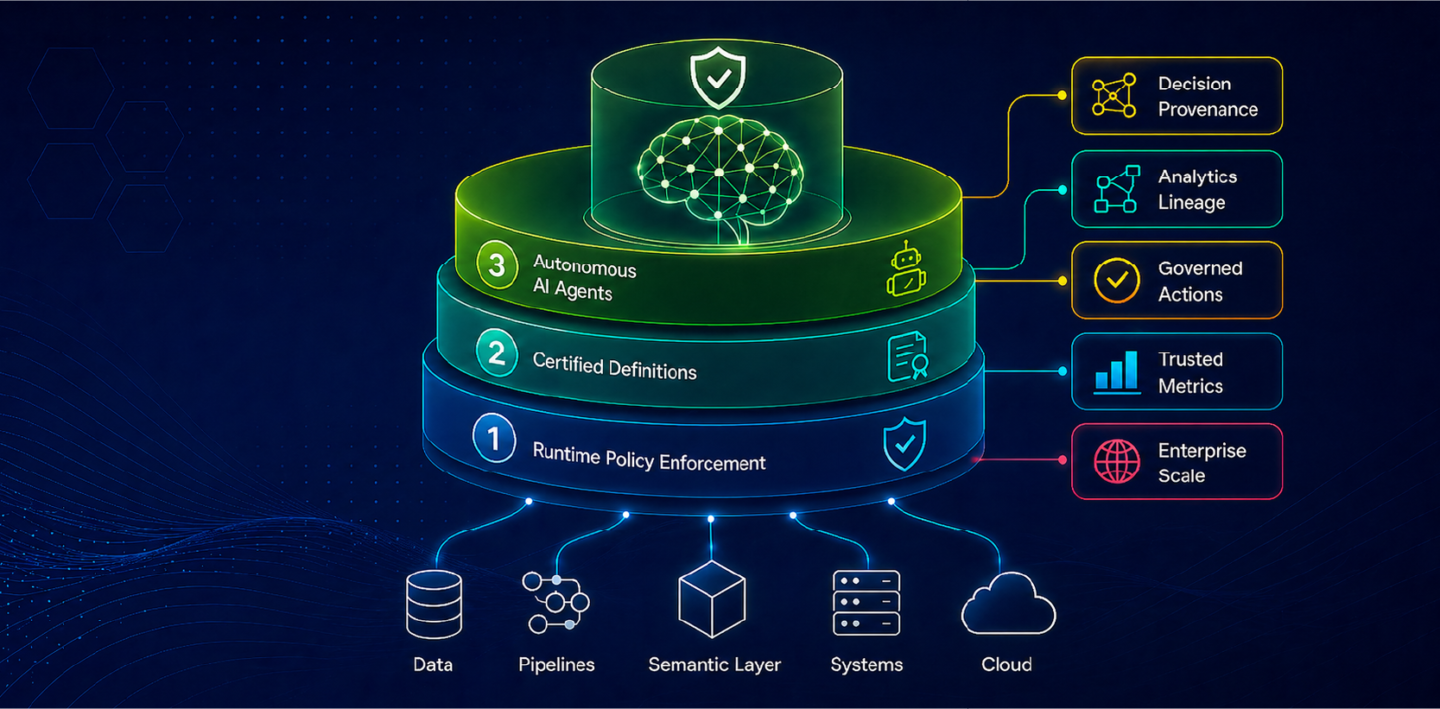

The Runtime Governance Blueprint for Autonomous Analytics AI

Governing autonomous analytics AI at enterprise scale requires three layers, each of which closes one of the failure modes above. ZenOptics calls this architecture The Decision Intelligence Platform. Together, the three layers extend governance from the data layer (where most enterprise practice stops) to the analytics AI layer (where the gap lives).

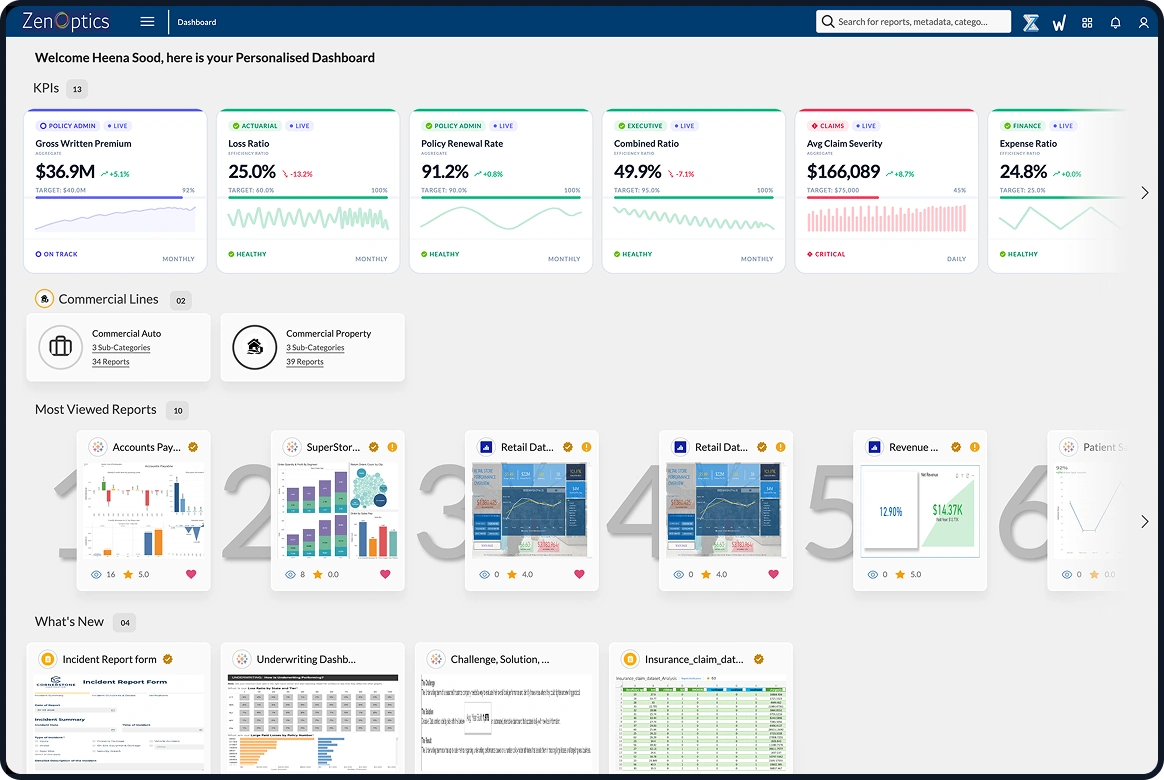

Atlas: Certified Analytics System of Record

Atlas is the analytics system of record. It inventories the BI estate across Tableau, Power BI, Looker, ThoughtSpot, and any combination of them, and it certifies which reports, definitions, and metrics are trusted. Without Atlas, governance has no anchor at the analytics layer. Every governance question begins with "anchored to which version of the truth," and Atlas answers that question at the analytics layer, where data governance does not reach.

Nexus: The Certified Analytics Context Substrate

Nexus is the Analytics Context Layer. It captures certified business definitions, relationships, and trusted metrics, and ensures every AI answer is grounded in that context. This removes definition drift and ensures that every answer is consistent with how the business measures performance.
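To make the idea of grounding concrete, here is a minimal sketch of a check that compares the definition an AI answer actually used against a certified one. It is an illustration only, not the Nexus API: `MetricDefinition`, `CERTIFIED`, `check_grounding`, and the sample revenue expression are all hypothetical names and values invented for this example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class MetricDefinition:
    """A business definition as a governance registry might store it (hypothetical shape)."""
    name: str
    expression: str
    version: str


# Hypothetical certified registry; a real one would be owned by domain stewards.
CERTIFIED: Dict[str, MetricDefinition] = {
    "revenue": MetricDefinition(
        name="revenue",
        expression="SUM(invoice_amount) - SUM(refund_amount)",
        version="v3",
    ),
}


def check_grounding(used: MetricDefinition) -> List[str]:
    """Return drift findings; an empty list means the answer used the certified definition."""
    certified = CERTIFIED.get(used.name)
    if certified is None:
        return [f"{used.name}: no certified definition exists"]
    findings: List[str] = []
    if used.expression != certified.expression:
        findings.append(f"{used.name}: expression differs from certified {certified.version}")
    if used.version != certified.version:
        findings.append(f"{used.name}: uses {used.version}, certified is {certified.version}")
    return findings


# An agent that silently dropped refunds from revenue is flagged as drift.
drifted = MetricDefinition("revenue", "SUM(invoice_amount)", "v3")
print(check_grounding(drifted))
```

The point of the sketch is the direction of the check: the certified definition is the reference, and any answer that cannot be matched to it is treated as ungrounded rather than trusted by default.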

Maestro: Runtime Policy Enforcement and Decision Provenance

Maestro is the execution and governance layer for autonomous analytics AI. It enforces policy at runtime, captures decision provenance, and ensures every AI-driven action is traceable back to certified definitions and metrics. Governance moves from static documentation to real-time enforcement.
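The difference between static policy and runtime enforcement can be sketched in a few lines. The example below is a hedged illustration, not Maestro's implementation: `ProposedAction`, the two policy functions, the 10,000 limit, and `authorize` are assumptions made up for this sketch. It shows policies evaluated against a proposed action before the agent is allowed to execute it.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class ProposedAction:
    """What an agent intends to do, described before it does it (hypothetical shape)."""
    agent: str
    kind: str
    metric: str
    metric_certified: bool
    amount: float


# A policy returns a denial reason, or None when the action is acceptable.
Policy = Callable[[ProposedAction], Optional[str]]


def require_certified_metric(action: ProposedAction) -> Optional[str]:
    if not action.metric_certified:
        return f"metric '{action.metric}' is not certified"
    return None


def cap_autonomous_amount(action: ProposedAction) -> Optional[str]:
    limit = 10_000.0  # assumed threshold, for illustration only
    if action.amount > limit:
        return f"amount {action.amount} exceeds autonomous limit {limit}"
    return None


POLICIES: List[Tuple[str, Policy]] = [
    ("certified-metric-only", require_certified_metric),
    ("autonomous-amount-cap", cap_autonomous_amount),
]


def authorize(action: ProposedAction) -> Tuple[bool, List[str]]:
    """Evaluate every policy before execution; any violation blocks the action."""
    violations: List[str] = []
    for name, policy in POLICIES:
        reason = policy(action)
        if reason is not None:
            violations.append(f"{name}: {reason}")
    return (len(violations) == 0, violations)


allowed, reasons = authorize(
    ProposedAction("pricing-agent", "adjust_discount", "revenue", False, 50_000.0)
)
print(allowed, reasons)
```

In this sketch the list of violations doubles as the start of an audit trail: the same evaluation that blocks or permits an action also records which named policies were applied.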

ZenOptics AI (ZIVA) is the conversational interface that operationalizes these layers. Business users interact through simple questions, while governance, context, and traceability are handled in the underlying architecture.

Organizations implementing ZenOptics typically see analytics AI deployments stand up two to three times faster, with governance built into the architecture rather than added after deployment.

What Governed Autonomous Analytics AI Looks Like in Production

Governed autonomous analytics AI has recognizable signatures in production. Every AI-generated answer carries lineage back to a certified definition. Every action an agent takes is traceable to a runtime policy that permitted it. Every metric an agent grounds an answer in is a certified metric the business has already stood behind. The CFO can sign off on AI-generated numbers because the governance is intrinsic, not because someone manually validated the output.

The inverse is the failure pattern Gartner is projecting. Agents act without runtime policy enforcement, decisions accumulate without traceable lineage at the decision layer, and definition drift goes undetected until two AI-generated reports contradict each other in a board meeting. The data governance stack has done its job correctly. The data is high quality, well-classified, and properly stewarded. The analytics AI governance gap remains the gap that matters.

The path to governed autonomous analytics AI is not a new data discipline. It is a new layer of governance designed for the analytics AI layer specifically, enforced at runtime, integrated with certified definitions and a certified estate.

Where to Go Next

If you have not read the overview yet, start with Analytics AI at Enterprise Scale: Why the Value Gap Is a Context Gap for the full value-realization framework behind this argument.

If your governance concern is preceded by a velocity concern (analytics AI deployments moving slowly to begin with), read Analytics AI Time-to-Value at Enterprise Scale: Why Context Is the Bottleneck.

If you are ready for the full architectural view across Know, Understand, and Act, read Architecting the AI-Ready Analytics Enterprise: The Decision Intelligence Blueprint.

FAQ: Autonomous Analytics AI Governance at Enterprise Scale

What is autonomous analytics AI governance?

Autonomous analytics AI governance is the discipline of governing what analytics AI agents say, do, and decide at runtime. It covers definition certification, decision provenance, lineage at the decision layer, and runtime policy enforcement. It is distinct from data governance, which governs the data itself rather than the AI's behavior on top of it.

Why does data governance not cover autonomous analytics AI?

Data governance governs the data: where it came from, who owns it, how it is classified, what quality it meets, who can access it. Analytics AI governance has to govern the answer: which business definitions the AI used, which policy constrained the action it triggered, and what trace each decision leaves. The two disciplines solve different problems. Mature data governance is necessary for autonomous analytics AI; it is not sufficient.

What is decision provenance in analytics AI?

Decision provenance is the verifiable record of how an analytics AI answer was assembled: which certified definition grounded it, which metric it depended on, which runtime policy permitted the action, and which source data was referenced. Decision provenance turns AI-generated answers into answers a CFO can sign off on, because every decision is traceable from the answer back to the certified business definition.
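As a rough illustration of what such a record could contain, the sketch below models the four elements named above as a single immutable structure with a content fingerprint. The field names, the sample identifiers, and the use of a SHA-256 hash for tamper evidence are assumptions for this example, not a description of any product's storage format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class ProvenanceRecord:
    """One AI-generated answer and everything it was grounded in (hypothetical shape)."""
    question: str
    answer: str
    definition_id: str       # certified definition that grounded the answer
    metric_id: str           # metric the answer depended on
    policy_id: str           # runtime policy that permitted the action
    source_tables: Tuple[str, ...]  # source data that was referenced

    def fingerprint(self) -> str:
        """Stable hash of the record, so later changes to any field are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


record = ProvenanceRecord(
    question="What was Q3 revenue?",
    answer="(answer text)",
    definition_id="revenue@v3",
    metric_id="fin.revenue.quarterly",
    policy_id="certified-metric-only",
    source_tables=("finance.invoices", "finance.refunds"),
)
print(record.fingerprint())
```

Storing the fingerprint alongside the answer lets a reviewer confirm that the definition, metric, policy, and sources on file are the ones the answer was actually produced with.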

How does Maestro enforce governance at runtime?

Maestro enforces analytics AI policy at the moment of execution rather than at quarterly review. Policies that used to live in compliance documents become enforceable rules the runtime layer reads and applies before an agent acts. Maestro also captures decision provenance for every AI-generated answer and traces each decision back through the Analytics Context Layer to the certified metric in the analytics estate.

What happens to autonomous analytics AI without runtime governance?

Without runtime governance, autonomous analytics AI agents act without enforcement of business policy, decisions accumulate without traceable lineage at the decision layer, and definition drift goes undetected. Gartner projects that by 2030, 50% of AI agent deployment failures will be tied to insufficient runtime governance enforcement. The cost of the gap is not a data quality breach; it is a steady accumulation of AI-generated decisions the business cannot stand behind.

How is autonomous analytics AI governance different from model risk management?

Model risk management (MRM) governs the model itself: training data lineage, model validation, drift detection, behavior monitoring. Autonomous analytics AI governance covers what the AI says about the business: which certified definitions ground answers, which runtime policies constrain the actions taken on those answers, and what trace each business decision leaves. MRM is necessary for autonomous analytics AI; it does not replace the analytics-specific governance layer where most enterprise governance gaps live in 2026.

Published May 4, 2026